The Crowdsourced Replication Initiative: Excerpts from the Executive Report

[Excerpts taken from the report “The Crowdsourced Replication Initiative: Investigating Immigration and Social Policy Preferences using Meta-Science” by Nate Breznau, Eike Mark Rinke, and Alexander Wuttke, posted at SocArXiv Papers]

“A burning question is what impact immigration and immigration-related events have on policy and social solidarity…This project employs crowdsourcing methods to address these issues.”

“This executive report provides short reviews of the area of social policy preferences and immigration, and the methods and impetus behind crowdsourcing. It details the methodological process we followed conducting the CRI and provides some descriptive statistics.”

“The project has three planned papers as follows. Paper I will include all participant co-authors; II and III just the PIs. Readers may follow the progress of these papers and find all details about the CRI on its Open Science Framework (OSF) project page.”

“I. ‘Does Immigration Undermine Social Policy? A Crowdsourced Re-Investigation of Public Preferences across Mass Migration Destinations’. The main findings of the project presenting a replication and extension based on original research of Brady and Finnigan (2014) titled, ‘Does Immigration Undermine Social Policy Preferences?’.”

“Given the sensitivity of small-N macro-comparative studies and that researchers might report only those models out of the thousands that gave the results they sought, reliance on a single replication study (or the original), even if it is deemed to perfectly reflect the underlying causal theory, is a flimsy means for concluding whether a study is verifiable. With a pool of researchers independently replicating a study we develop far more confidence in the results.”

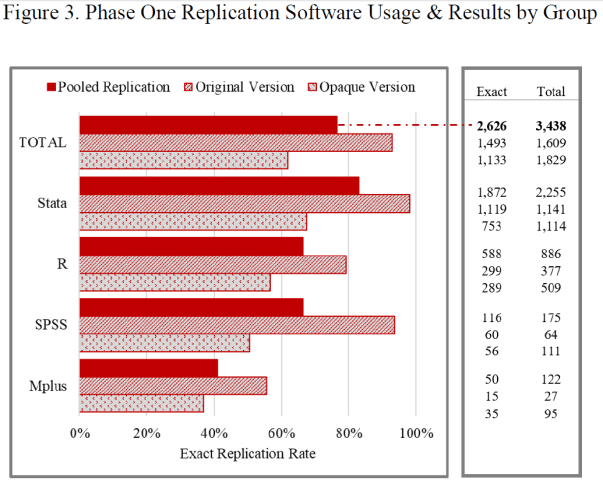

“We hypothesize that there is researcher variability in replications even when the original study is fully transparent…To do this we randomly divided our researcher sample into an original group and an opaque group. The original [group] received all materials including code from the original study while the opaque group received an anonymized version of the study, with only descriptive rather than numeric results and no code.”
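The random split into an original and an opaque group can be sketched in Python. This is a minimal illustration, not the CRI's actual procedure: the report does not publish its assignment code, the helper name `assign_groups` and the seed are hypothetical, and the sketch assumes teams (rather than individual researchers) were split into two equal halves. The team labels are placeholders sized to the 106 teams reported at the close of the call.

```python
import random


def assign_groups(team_ids, seed=None):
    """Randomly split teams into two equal-sized experimental groups.

    Hypothetical helper: the equal split and team-level assignment are assumptions.
    """
    rng = random.Random(seed)      # seeded generator so the split is reproducible
    shuffled = list(team_ids)      # copy so the caller's list is left untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"original": shuffled[:half], "opaque": shuffled[half:]}


# Placeholder labels for 106 teams; not the real participant list.
teams = [f"team_{i:03d}" for i in range(1, 107)]
groups = assign_groups(teams, seed=2018)
print(len(groups["original"]), len(groups["opaque"]))  # 53 53
```

Seeding the generator makes the assignment auditable after the fact, which fits the project's emphasis on transparency; with an odd number of teams the opaque group would receive the extra team.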

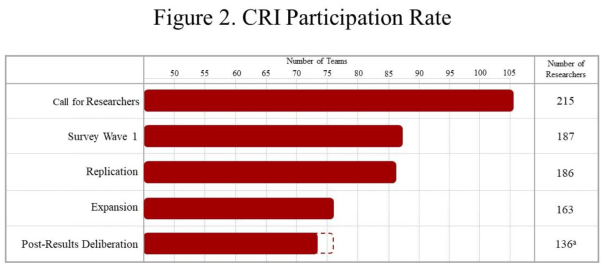

“We had 216 researchers in 106 teams from 26 countries in 5 continents respond to our call for researchers as of its closing on July 27th, 2018…Figure 2 gives the rates of participation throughout the course of the CRI.”

“The call for researchers promised co-authorship on the final published paper, similar to the Silberzahn et al. (2018) study. For us this was an essential component to reduce, if not remove, publication or findings biases. We wanted to be clear that simply doing the tasks we assigned qualified them as equal co-researchers and co-authors; they needed not produce anything special, significant or ‘groundbreaking’, only solid work.”

“In the first group, labeled original version, we assign teams to assess the verifiability of the prominent B&F [Brady and Finnigan 2014] macro-comparative study. This group has minimal research design decisions to make, theoretically none…As the original study is very transparent with the authors sharing their analytical code (for the statistical software Stata) and country-level data, it is a least-likely case to find variation in outcomes.”

“We gave the second group a slightly artificial treatment. They replicate a derivation of the B&F study altered by us to render it anonymous and less transparent, labeled opaque version…offering them limited information about the original study, forcing them to make ‘tougher’ choices in how to replicate it…To do this we re-worded the study, gave no numeric results and provided no code to the replicators in this experimental group.”

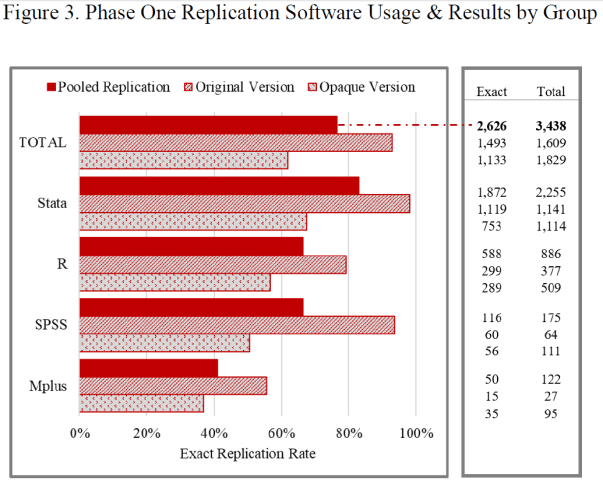

“Replicators reported odds-ratios following the estimates reported by B&F…We considered an odds-ratio to be an ‘exact’ replication if it differed from the original effect by less than 0.01; this allows for rounding error. Figure 3 reports the ratio of exact replications by usage of software types and experimental group.”
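The ‘exact’ replication rule and the per-group shares reported in Figure 3 can be sketched as follows. This assumes the comparison is an absolute difference on the odds-ratio scale with a strict threshold of less than 0.01. The function names and the sample records are hypothetical, and the odds ratios are made-up values for illustration, not results from the CRI or from Brady and Finnigan (2014).

```python
from collections import defaultdict

# Assumed threshold: absolute difference on the odds-ratio scale, strictly below 0.01.
TOLERANCE = 0.01


def is_exact(replicated_or, original_or, tol=TOLERANCE):
    """Return True if a replicated odds ratio counts as an 'exact' replication."""
    return abs(replicated_or - original_or) < tol


def exact_share(results):
    """Share of exact replications per (group, software) pair.

    `results` is an iterable of (group, software, replicated_or, original_or).
    """
    counts = defaultdict(lambda: [0, 0])  # (group, software) -> [exact, total]
    for group, software, rep, orig in results:
        tally = counts[(group, software)]
        tally[1] += 1
        if is_exact(rep, orig):
            tally[0] += 1
    return {key: exact / total for key, (exact, total) in counts.items()}


# Made-up illustrative records, not CRI data.
results = [
    ("original", "Stata", 1.104, 1.10),
    ("original", "Stata", 1.095, 1.10),
    ("opaque", "R", 1.13, 1.10),
    ("opaque", "R", 1.104, 1.10),
]
print(exact_share(results))  # {('original', 'Stata'): 1.0, ('opaque', 'R'): 0.5}
```

Keying the tally on both experimental group and software mirrors the breakdown described for Figure 3; dropping `software` from the key would give a single share per group.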

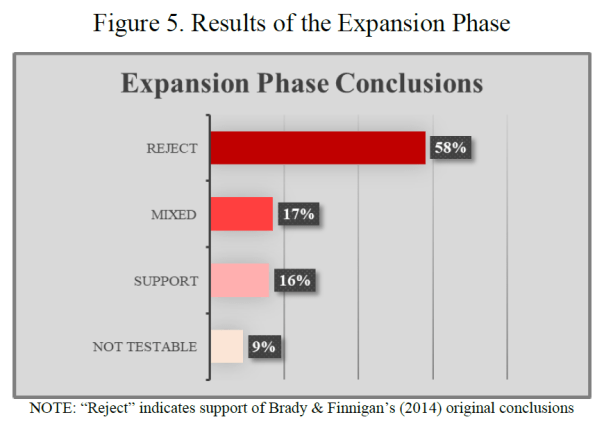

“The expansion phase required teams to think about the B&F models and determine if they thought of improvements or alternatives. The idea was to challenge researchers to think about the data-generating model, or plausible alternatives…”

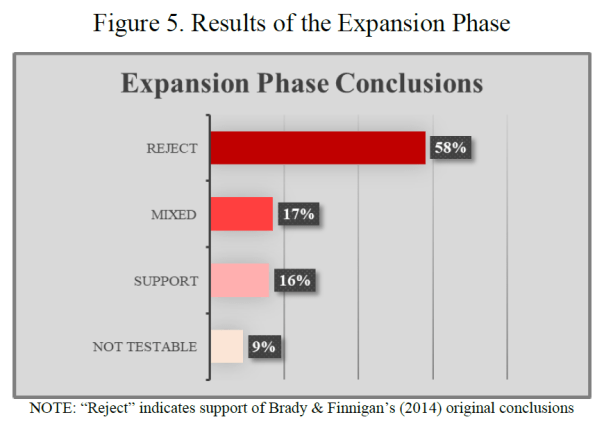

“…we asked each team for a subjective conclusion: ‘We ask that you provide a substantive conclusion based on your test of the hypothesis that a greater stock or a greater increase in the stock of foreign persons in a given society leads the general public to become less supportive of social policy…’ Figure 5 offers the subjective conclusions of the researchers drawn based on their expansion analyses.”

“Our main conclusions will arrive in the three working papers we outlined in section 1.3. Here we provided only an overview.”

“We can say with some certainty that the original study of Brady and Finnigan (2014) is verifiable in our replications. The expansions also suggest that their conclusions are robust to a multiverse of data set-ups and a range of alternative model specifications. This suggests there is not a ‘big picture’ finding that immigration erodes popular support for social policy or the welfare state as a whole. However, our findings cast enough suspicion into the equation that further scrutiny is necessary at this big picture level.”

The full report is available at SocArXiv Papers.
