One Question + Many Researchers = Many Different Answers

[Excerpts taken from the article “Crowdsourcing hypothesis tests: Making transparent how design choices shape research results” by Justin Landy and many others, posted at the preprint repository at the University of Essex]

“…we introduce a crowdsourced approach to hypothesis testing. In the crowdsourcing initiative reported here, up to 13 research teams (out of a total of 15 teams) independently created stimuli to address the same five research questions, while fully blind to one another’s approaches, and to the original methods and the direction of the original results.”

“The original hypotheses, which were all unpublished at the time the project began, dealt with topics including moral judgment, negotiations, and implicit cognition.”

“Rather than varying features of the same basic design…we had different researchers design distinct studies to test the same research questions…”

“The five research questions were gathered by emailing colleagues conducting research in the area of moral judgment and asking if they had initial evidence for an effect that they would like to volunteer for crowdsourced testing by other research groups.”

“We identified five directional hypotheses in the areas of moral judgment, negotiation, and implicit cognition, each of which had been supported by one then unpublished study.”

“A subset of the project coordinators…recruited 15 teams of researchers through their professional networks to independently design materials to test each hypothesis.”

“…materials designers were provided with the nondirectional versions of the five hypotheses presented in Table 1, and developed materials to test each hypothesis independently of the other teams.”

“…our primary focus is on dispersion in effect sizes across different study designs.”

“The diversity in effect size estimates from different study designs created to test the same theoretical ideas constitute the primary output of this project. For Hypotheses 1-4, the effect sizes were independent-groups Cohen’s ds, and for Hypothesis 5, they were Pearson rs.”
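For readers unfamiliar with the metric, here is a minimal sketch of how an independent-groups Cohen's d is commonly computed from two samples, using the pooled standard deviation. The function name `cohens_d` and the sample data are hypothetical, and the excerpt does not say exactly how the authors computed their estimates (for example, whether any small-sample correction was applied).

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Independent-groups Cohen's d using the pooled standard deviation.

    Illustrative helper only; not the authors' code.
    """
    n_a, n_b = len(group_a), len(group_b)
    # statistics.variance returns the sample variance (n - 1 denominator).
    var_a, var_b = variance(group_a), variance(group_b)
    pooled_sd = sqrt(((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2))
    return (mean(group_a) - mean(group_b)) / pooled_sd


# Hypothetical ratings from two conditions of one study design.
condition_1 = [1, 2, 3]
condition_2 = [2, 3, 4]
d = cohens_d(condition_1, condition_2)
```

The sign of d depends on which condition is listed first, which matters here because the project reports effects in opposing directions for the same hypothesis.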

“Effect size estimates…were calculated… using…random-effects meta-analyses…This model treats each observed effect size $y_i$ as a function of the average true effect size $\mu$, between-study variability, $u_i \sim N(0, \tau^2)$, and sampling error, $e_i \sim N(0, v_i)$…$y_i = \mu + u_i + e_i$.”
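The random-effects model quoted above can be sketched in a few lines. The excerpt does not say which estimator the authors used for the between-study variance, so the DerSimonian-Laird method below is an assumption chosen for its simplicity, and the function name `random_effects_dl` and the input numbers are hypothetical.

```python
from math import sqrt


def random_effects_dl(effects, variances):
    """Random-effects meta-analysis with the DerSimonian-Laird estimator.

    effects:   observed effect sizes y_i, one per study design
    variances: their sampling variances v_i
    Returns (mu, tau2, se_mu, q). Illustrative sketch, not the authors' code.
    """
    # Fixed-effect (inverse-variance) weights and pooled mean.
    w = [1.0 / v for v in variances]
    sum_w = sum(w)
    mu_fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w

    # Cochran's Q: weighted squared deviations from the fixed-effect mean.
    q = sum(wi * (yi - mu_fixed) ** 2 for wi, yi in zip(w, effects))
    df = len(effects) - 1

    # Method-of-moments estimate of tau^2, truncated at zero.
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0

    # Random-effects weights add tau^2 to each study's sampling variance.
    w_star = [1.0 / (v + tau2) for v in variances]
    mu = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se_mu = sqrt(1.0 / sum(w_star))
    return mu, tau2, se_mu, q


# Two hypothetical study designs that disagree about the same hypothesis.
mu, tau2, se_mu, q = random_effects_dl([0.0, 1.0], [0.1, 0.1])
```

In this toy input the two designs differ by far more than their sampling variances would predict, so the estimated $\tau^2$ is well above zero. That is the pattern the project is built to detect.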

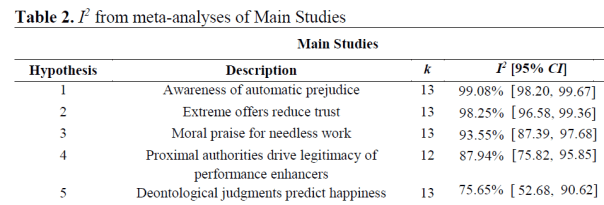

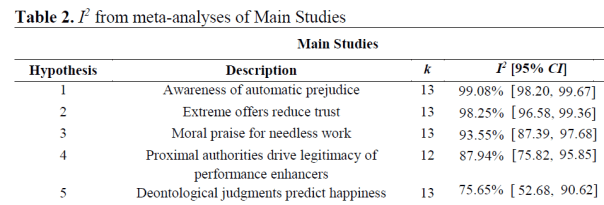

“The $I^2$ statistic quantifies the percentage of variance among effect sizes attributable to heterogeneity, rather than sampling variance. By convention, $I^2$ values of 25%, 50%, and 75% indicate low, moderate, and high levels of unexplained heterogeneity, respectively.”
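As a rough illustration, $I^2$ is often computed from Cochran's Q and the number of effect sizes k as $\max(0, (Q - (k - 1)) / Q) \times 100$. The sketch below assumes that standard formula; the excerpt does not state the exact computation the authors used, and the function name `i_squared` is hypothetical.

```python
def i_squared(q, k):
    """Percentage of total variation attributed to heterogeneity.

    q: Cochran's Q statistic
    k: number of effect sizes (so Q has k - 1 degrees of freedom)
    Illustrative helper only; assumes the common Q-based formula.
    """
    if q <= 0:
        return 0.0
    # Negative values are truncated to zero by convention.
    return max(0.0, (q - (k - 1)) / q) * 100.0


# Hypothetical example: Q = 5 from k = 2 effect sizes.
pct = i_squared(5.0, 2)
```

With Q = 5 and k = 2 this gives about 80%, which falls above the conventional 75% benchmark for high heterogeneity mentioned in the quote.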

“All five hypotheses showed statistically significant and high levels of heterogeneity… The vast majority of observed variance across effect sizes in both studies is unexplained heterogeneity.”

Discussion

“How contingent is support for scientific hypotheses on the subjective choices that researchers make when designing studies?… In this crowdsourced project, when up to 13 independent research teams designed their own studies to test five original research questions, variability in observed effect sizes proved dramatic…”

“…different research teams designed studies that returned statistically significant effects in opposing directions for the same research question for four out of five hypotheses in the Main Studies…”

“Even the most consistently supported original hypotheses still exhibited a wide range of effect sizes, with the smallest range being d = -0.37 to d = 0.26…”

“…a number of aspects of our approach may have led to artificial homogeneity in study designs.”

“In particular, materials designers were restricted to creating simple experiments with a self-reported dependent measure that could be run online in five minutes or less.”

“Further, the key statistical test of the hypothesis had to be a simple comparison between two conditions (for Hypotheses 1-4), or a Pearson correlation (for Hypothesis 5).”

“Full thirty-minute- to hour-long-laboratory paradigms with factorial designs, research confederates, and more complex manipulations and outcome measures (e.g., behavioral measures) contain far more researcher choice points and may be associated with even greater heterogeneity in research results…”

“…one cannot generalize the present results to all hypotheses in all subfields…further initiatives to crowdsource hypothesis tests are needed before drawing definitive conclusions about the impact of subjective researcher choices on empirical outcomes.”

