[Excerpts are taken from the article “Investigating the replicability of preclinical cancer biology” by Errington et al., published in eLife.]

“Large-scale replication studies in the social and behavioral sciences provide evidence of replicability challenges (Camerer et al., 2016; Camerer et al., 2018; Ebersole et al., 2016; Ebersole et al., 2020; Klein et al., 2014; Klein et al., 2018; Open Science Collaboration, 2015).”

“In psychology, across 307 systematic replications and multisite replications, 64% reported statistically significant evidence in the same direction and effect sizes 68% as large as the original experiments (Nosek et al., 2021).”

“In the biomedical sciences, the ALS Therapy Development Institute observed no effectiveness of more than 100 potential drugs in a mouse model in which prior research reported effectiveness in slowing down disease, and eight of those compounds were tried and failed in clinical trials costing millions and involving thousands of participants (Perrin, 2014).”

“Of 12 replications of preclinical spinal cord injury research in the FORE-SCI program, only two clearly replicated the original findings – one under constrained conditions of the injury and the other much more weakly than the original (Steward et al., 2012).”

“And, in cancer biology and related fields, two drug companies (Bayer and Amgen) reported failures to replicate findings from promising studies that could have led to new therapies (Prinz et al., 2011; Begley and Ellis, 2012). Their success rates (25% for the Bayer report, and 11% for the Amgen report) provided disquieting initial evidence that preclinical research may be much less replicable than recognized.”

“In the Reproducibility Project: Cancer Biology, we sought to acquire evidence about the replicability of preclinical research in cancer biology by repeating selected experiments from 53 high-impact papers published in 2010, 2011, and 2012 (Errington et al., 2014). We describe in a companion paper (Errington et al., 2021b) the challenges we encountered while repeating these experiments. … These challenges meant that we only completed 50 of the 193 experiments (26%) we planned to repeat. The 50 experiments that we were able to complete included a total of 158 effects that could be compared with the same effects in the original paper.”

“There is no single method for assessing the success or failure of replication attempts (Mathur and VanderWeele, 2019; Open Science Collaboration, 2015; Valentine et al., 2011), so we used seven different methods to compare the effect reported in the original paper and the effect observed in the replication attempt…”

“…136 of the 158 effects (86%) reported in the original papers were positive effects – the original authors interpreted their data as showing that a relationship between variables existed or that an intervention had an impact on the biological system being studied. The other 22 (14%) were null effects – the original authors interpreted their data as not showing evidence for a meaningful relationship or impact of an intervention.”

“Furthermore, 117 of the effects reported in the original papers (74%) were supported by a numerical result (such as graphs of quantified data or statistical tests), and 41 (26%) were supported by a representative image or similar. For effects where the original paper reported a numerical result for a positive effect, it was possible to use all seven methods of comparison. However, for cases where the original paper relied on a representative image (without a numerical result) as evidence for a positive effect, or when the original paper reported a null effect, it was not possible to use all seven methods.”

Summarizing replications across five criteria

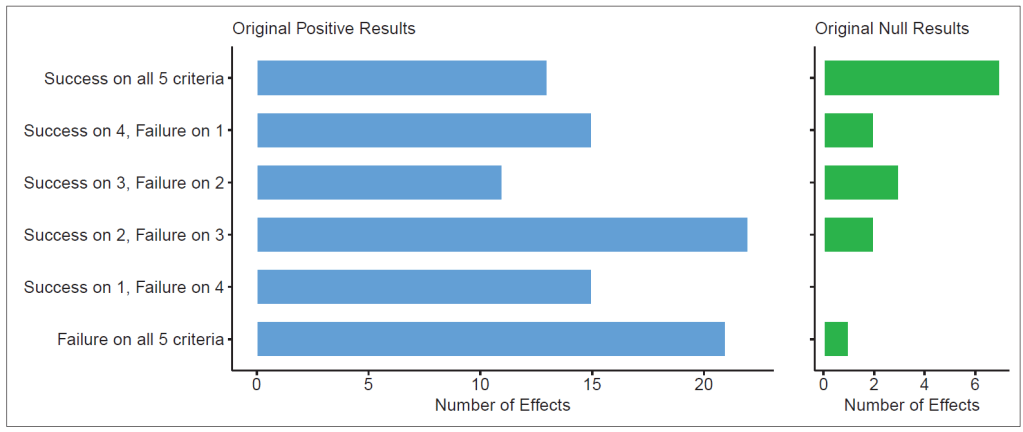

“To provide an overall picture, we combined the replication rates by five of these criteria, selecting criteria that could be meaningfully applied to both positive and null effects … The five criteria were: (i) direction and statistical significance (p < 0.05); (ii) original effect size in replication 95% confidence interval; (iii) replication effect size in original 95% confidence interval; (iv) replication effect size in original 95% prediction interval; (v) meta-analysis combining original and replication effect sizes is statistically significant (p < 0.05).”
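As a rough illustration of how the five criteria could be checked for a single original positive effect, here is a minimal Python sketch. It is not the authors' analysis code. It assumes each effect is summarized by an effect size and a standard error on a scale where a normal approximation is reasonable, and it uses a fixed-effect (inverse-variance) meta-analysis for criterion (v); the excerpt does not specify the meta-analytic model. The function and parameter names (`five_criteria`, `orig_es`, `orig_se`, `rep_es`, `rep_se`) are hypothetical.

```python
import math

# Two-sided 95% critical value of the standard normal distribution.
Z95 = 1.959963984540054


def two_sided_p(z):
    """Two-sided p-value for a z statistic under a normal approximation."""
    return math.erfc(abs(z) / math.sqrt(2))


def five_criteria(orig_es, orig_se, rep_es, rep_se):
    """Return five booleans, one per criterion, for an original positive effect.

    Assumption: effect sizes and standard errors are on a scale where a
    normal approximation holds. This is a sketch, not the paper's method.
    """
    gap = abs(rep_es - orig_es)

    # (i) Replication is in the same direction and significant at p < 0.05.
    c1 = (orig_es * rep_es > 0) and (two_sided_p(rep_es / rep_se) < 0.05)
    # (ii) Original effect size lies inside the replication 95% CI.
    c2 = gap <= Z95 * rep_se
    # (iii) Replication effect size lies inside the original 95% CI.
    c3 = gap <= Z95 * orig_se
    # (iv) Replication effect size lies inside the original 95% prediction
    #      interval, which adds the replication's sampling error.
    c4 = gap <= Z95 * math.sqrt(orig_se ** 2 + rep_se ** 2)
    # (v) Fixed-effect meta-analysis of both estimates is significant.
    w_o, w_r = 1 / orig_se ** 2, 1 / rep_se ** 2
    pooled = (w_o * orig_es + w_r * rep_es) / (w_o + w_r)
    pooled_se = math.sqrt(1 / (w_o + w_r))
    c5 = two_sided_p(pooled / pooled_se) < 0.05

    return [c1, c2, c3, c4, c5]


# Made-up numbers, for illustration only.
close_match = five_criteria(0.8, 0.2, 0.7, 0.2)
near_zero = five_criteria(0.8, 0.2, -0.05, 0.2)
print(sum(close_match), sum(near_zero))
```

In the second made-up case the replication fails criteria (i) through (iv), yet the pooled estimate is still significant, which shows why the criteria can disagree and why the authors report several of them rather than one.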

“For replications of original positive effects, 13 of 97 (13%) replications succeeded on all five criteria, 15 succeeded on four, 11 succeeded on three, 22 failed on three, 15 failed on four, and 21 (22%) failed on all five (… Figure 6).”

“For original null effects, 7 of 15 (47%) replications succeeded on all five criteria, 2 succeeded on four, 3 succeeded on three, 0 failed on three, 2 failed on four, and 1 (7%) failed on all five.”

“… Combining positive and null effects, 51 of 112 (46%) replications succeeded on more criteria than they failed, and 61 (54%) replications failed on more criteria than they succeeded.”
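The combined figures can be re-derived from the counts quoted above. The short Python sketch below keys each tally by the number of criteria passed (so "failed on three" is stored as two passed) and counts a replication as succeeding on more criteria than it failed when it passes at least three of five. The variable and function names are illustrative, not taken from the paper.

```python
# Number of replications by how many of the five criteria they passed,
# transcribed from the quoted passages above.
positive_effects = {5: 13, 4: 15, 3: 11, 2: 22, 1: 15, 0: 21}
null_effects = {5: 7, 4: 2, 3: 3, 2: 0, 1: 2, 0: 1}


def summarize(*tallies):
    """Return (total, mostly_succeeded, mostly_failed) across the tallies."""
    total = sum(count for tally in tallies for count in tally.values())
    mostly_succeeded = sum(
        count
        for tally in tallies
        for passed, count in tally.items()
        if passed >= 3  # passed more criteria than failed
    )
    return total, mostly_succeeded, total - mostly_succeeded


total, succeeded, failed = summarize(positive_effects, null_effects)
print(total, succeeded, failed)
print(round(100 * succeeded / total), round(100 * failed / total))
```

The totals per group (97 positive, 15 null) and the combined split (51 versus 61 of 112, or 46% versus 54%) agree with the quoted text.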

“We explored five candidate moderators of replication success and did not find strong evidence to indicate that any of them account for variation in replication rates we observed in our sample. The clearest indicator of replication success was that smaller effects were less likely to replicate than larger effects … Research into replicability in other disciplines has also found that findings with stronger initial evidence (such as larger effect sizes and/or smaller p-values) is more likely to replicate (Nosek et al., 2021; Open Science Collaboration, 2015).”

“The present study provides substantial evidence about the replicability of findings in a sample of high-impact papers published in the field of cancer biology in 2010, 2011, and 2012. The evidence suggests that replicability is lower than one might expect of the published literature. Causes of non-replicability could be due to factors in conducting and reporting the original research, conducting the replication experiments, or the complexity of the phenomena being studied. The present evidence cannot parse between these possibilities…”

“Stakeholders from across the research community have been raising concerns and generating evidence about dysfunctional incentives and research practices that could slow the pace of discovery. This paper is just one contribution to the community’s self-critical examination of its own practices.”

“Science pursuing and exposing its own flaws is just science being science. Science is trustworthy because it does not trust itself. Science earns that trustworthiness through publicly, transparently, and continuously seeking out and eradicating error in its own culture, methods, and findings. Increasing awareness and evidence of the deleterious effects of reward structures and research practices will spur one of science’s greatest strengths, self-correction.”


References

Begley CG, Ellis LM. 2012. Drug development: Raise standards for preclinical cancer research. Nature 483: 531–533.

Camerer CF, Dreber A, Forsell E, Ho TH, Huber J, Johannesson M, Kirchler M, Almenberg J, Altmejd A, Chan T, Heikensten E, Holzmeister F, Imai T, Isaksson S, Nave G, Pfeiffer T, Razen M, Wu H. 2016. Evaluating replicability of laboratory experiments in economics. Science 351: 1433–1436.

Camerer CF, Dreber A, Holzmeister F, Ho T-H, Huber J, Johannesson M, Kirchler M, Nave G, Nosek BA, Pfeiffer T, Altmejd A, Buttrick N, Chan T, Chen Y, Forsell E, Gampa A, Heikensten E, Hummer L, Imai T, Isaksson S, et al. 2018. Evaluating the replicability of social science experiments in Nature and Science between 2010 and 2015. Nature Human Behaviour 2: 637–644.

Ebersole CR, Mathur MB, Baranski E, Bart-Plange D-J, Buttrick NR, Chartier CR, Corker KS, Corley M, Hartshorne JK, IJzerman H, Lazarević LB, Rabagliati H, Ropovik I, Aczel B, Aeschbach LF, Andrighetto L, Arnal JD, Arrow H, Babincak P, Bakos BE, et al. 2020. Many Labs 5: Testing Pre-Data-Collection Peer Review as an Intervention to Increase Replicability. Advances in Methods and Practices in Psychological Science 3: 309–331.

Ebersole CR, Atherton OE, Belanger AL, Skulborstad HM, Allen JM, Banks JB, Baranski E, Bernstein MJ, Bonfiglio DBV, Boucher L, Brown ER, Budiman NI, Cairo AH, Capaldi CA, Chartier CR, Chung JM, Cicero DC, Coleman JA, Conway JG, Davis WE, et al. 2016. Many Labs 3: Evaluating participant pool quality across the academic semester via replication. Journal of Experimental Social Psychology 67: 68–82.

Errington TM, Iorns E, Gunn W, Tan FE, Lomax J, Nosek BA. 2014. An open investigation of the reproducibility of cancer biology research. eLife 3: e04333.

Errington TM, Denis A, Perfito N, Iorns E, Nosek BA. 2021b. Challenges for assessing replicability in preclinical cancer biology. eLife 10: e67995.

Klein RA, Ratliff KA, Vianello M, Adams RB, Bahník Š, Bernstein MJ, Bocian K, Brandt MJ, Brooks B, Brumbaugh CC, Cemalcilar Z, Chandler J, Cheong W, Davis WE, Devos T, Eisner M, Frankowska N, Furrow D, Galliani EM, Nosek BA. 2014. Investigating Variation in Replicability: A “Many Labs” Replication Project. Social Psychology 45: 142–152.

Klein RA, Vianello M, Hasselman F, Adams BG, Adams RB, Alper S, Aveyard M, Axt JR, Babalola MT, Bahník Š, Batra R, Berkics M, Bernstein MJ, Berry DR, Bialobrzeska O, Binan ED, Bocian K, Brandt MJ, Busching R, Rédei AC, et al. 2018. Many Labs 2: Investigating Variation in Replicability Across Samples and Settings. Advances in Methods and Practices in Psychological Science 1: 443–490.

Mathur MB, VanderWeele TJ. 2019. Challenges and suggestions for defining replication “success” when effects may be heterogeneous: Comment on Hedges and Schauer (2019). Psychological Methods 24: 571–575.

Nosek BA, Hardwicke TE, Moshontz H, Allard A, Corker KS, Dreber A, Fidler F, Hilgard J, Struhl MK, Nuijten MB, Rohrer JM, Romero F, Scheel AM, Scherer LD, Schönbrodt FD, Vazire S. 2021. Replicability, Robustness, and Reproducibility in Psychological Science. Annual Review of Psychology 73: 114157.

Open Science Collaboration. 2015. Estimating the reproducibility of psychological science. Science 349: aac4716.

Perrin S. 2014. Preclinical research: Make mouse studies work. Nature 507: 423–425.

Prinz F, Schlange T, Asadullah K. 2011. Believe it or not: how much can we rely on published data on potential drug targets? Nature Reviews Drug Discovery 10: 712.

Steward O, Popovich PG, Dietrich WD, Kleitman N. 2012. Replication and reproducibility in spinal cord injury research. Experimental Neurology 233: 597–605.

Valentine JC, Biglan A, Boruch RF, Castro FG, Collins LM, Flay BR, Kellam S, Mościcki EK, Schinke SP. 2011. Replication in prevention science. Prevention Science 12: 103–117.
