This blog is based on the book of the same name by Norbert Hirschauer, Sven Grüner, and Oliver Mußhoff that was published in SpringerBriefs in Applied Statistics and Econometrics in August 2022. Starting from the premise that a lacking understanding of the probabilistic foundations of statistical inference is responsible for the inferential errors associated with the conventional routine of null-hypothesis-significance-testing (NHST), the book provides readers with an effective intuition and conceptual understanding of statistical inference. It is a resource for statistical practitioners who are confronted with the methodological debate about the drawbacks of “significance testing” but do not know what to do instead. It is also targeted at scientists who have a genuine methodological interest in the statistical reform debate.

I. BACKGROUND

Data-based scientific propositions about the world are extremely important for sound decision-making in organizations and society as a whole. Think of climate change or the Covid-19 pandemic with questions such as of how face masks, vaccines or restrictions on people’s movements work. That said, it becomes clear that the debate on p-values and statistical significance tests addresses probably the most fundamental question of the data-based sciences: How can we learn from data and come to the most reasonable belief (proposition) regarding a real-world state of interest given the available evidence (data) and the remaining uncertainty? Answering that question and understanding when and how statistical measures (i.e., summary statistics of the given dataset) can help us evaluate the knowledge gain that can be obtained from a particular sample of data is extremely important in any field of science.

In 2016, the American Statistical Association (ASA) issued an unprecedented methodological warning on p-values that set out what p-values are, and what they can and can’t tell us. It also contained a clear statement that, despite the delusive “hypothesis-testing” terminology of conventional statistical routines, p-values can neither be used to determine whether a hypothesis is true nor whether a finding is important. Against a background of persistent inferential errors associated with significance testing, the ASA felt compelled to pursue the issue further. In October 2017, it organized a symposium on the future of statistical inference whose major outcome was a special issue “Statistical Inference in the 21st Century: A World Beyond p < 0.05” in The American Statistician. Expressing their hope that this special issue would lead to a major rethink of statistical inference, the guest editors concluded that it is time to stop using the term “statistically significant” entirely. Almost simultaneously, a widely supported call to retire statistical significance was published in Nature.

Empirically working economists might be perplexed by this fundamental debate. They are usually not trained statisticians but statistical practitioners. As such, they have a keen interest in their respective field of research but “only apply statistics” – usually by following the unquestioned routine of reporting p-values and asterisks. Due to the thousands of critical papers that have been written over the last decades, even most statistical practitioners will, by now, be aware of the severe criticism of NHST-practices that many used to follow much like an automatic routine. Nonetheless, all those without a methodological bent in statistics – and this is likely to be the majority of empirical researchers – are likely to be puzzled and ask the question: What is going on here and what should I do now?

While the debate is highly visible now, many empirical researchers are likely to ignore that fundamental criticism of NHST have been voiced for decades – basically ever since significance testing became the standard routine in the 1950s (see Key References below).

KEY REFERENCES: Reforming statistical practices

2022 – Why and how we should join the shift from significance testing to estimation: Journal of Evolutionary Biology (Berner and Amrhein)

2019 – Embracing uncertainty: The days of statistical significance are numbered: Pediatric Anesthesia (Davidson)

2019 – Call to retire statistical significance: Nature (Amrhein et al.)

2019 – Special issue editorial: “[I]t is time to stop using the term ‘statistically significant’ entirely. Nor should variants such as ‘significantly different,’ ‘p < 0.05,’ and ‘nonsignificant’ survive, […].” The American Statistician (Wasserstein et al.)

2018 – Statistical Rituals: The Replication Delusion and How We Got There: Advances in Methods and Practices in Psychological Science (Gigerenzer)

2017 – ASA symposium on “Statistical Inference in the 21st Century: A World Beyond p < 0.05”

2016 – ASA warning: “The p-value can neither be used to determine whether a scientific hypothesis is true nor whether a finding is important.” The American Statistician (Wasserstein and Lazar)

2016 – Statistical tests, P values, confidence intervals, and power: a guide to misinterpretations: European Journal of Epidemiology (Greenland et al.)

2015 – Editorial ban on using NHST: Basic and Applied Social Psychology (Trafimow and Marks)

≈ 2015 – Change of reporting standards in journal guidelines: “Do not use asterisks to denote significance of estimation results. Report the standard errors in parentheses.” American Economic Review

2014 – The Statistical Crisis in Science: American Scientist (Gelman and Loken)

2011 – The Cult of Statistical Significance – What Economists Should and Should not Do to Make their Data Talk: Schmollers Jahrbuch (Krämer)

2008 – The Cult of Statistical Significance. How the Standard Error Costs Us Jobs, Justice, and Lives: University of Michigan Press (Ziliak and McCloskey).

2007 – Statistical Significance and the Dichotomization of Evidence: Journal of the American Statistical Association (McShane and Gal)

2004 – The Null Ritual: What You Always Wanted to Know About Significance Testing but Were Afraid to Ask: SAGE handbook of quantitative methodology for the social sciences (Gigerenzer et al.)

2000 – Null hypothesis significance testing: A review of an old and continuing controversy: Psychological Methods (Nickerson)

1996 – A task force on statistical inference of the American Psychological Association dealt with calls for banning p-values but rejected the idea as too extreme: American Psychologist (Wilkinson and Taskforce on Statistical Inference)

1994 – The earth is round (p < 0.05): “[A p-value] does not tell us what we want to know, and we so much want to know what we want to know that, out of desperation, we nevertheless believe that it does!” American Psychologist (Cohen)

…

1964 – How should we reform the teaching of statistics? “[Significance tests] are popular with non-statisticians, who like to feel certainty where no certainty exists.” Journal of the Royal Statistical Society (Yates and Healy)

1960 – The fallacy of the null-hypothesis significance test: Psychological Bulletin (Rozeboom)

1959 – Publication Decisions and Their Possible Effects on Inferences Drawn from Tests of Significance–Or Vice Versa: Journal of the American Statistical Association (Sterling)

1951 – The Influence of Statistical Methods for Research Workers on the Development of […] Statistics: Journal of the American Statistical Association (Yates)

Already back in the 1950s, scientists started expressing severe criticisms of NHST and called for reforms that would shift reporting practices away from the dichotomy of significance tests to the estimation of effect sizes and uncertainty. It is safe to say that the core criticisms and reform suggestions remained largely unchanged over those last seven decades. This is because – unfortunately – the inferential errors that they address have remained the same. Nonetheless, after the intensified debate in the last decade and some institutional-level efforts, such as the revision of author guidelines in some journals, some see signs that a paradigm shift from testing to estimation is finally under way. Unfortunately, however, reforms seem to lag behind in economics compared to many other fields.

II. THE METHODOLOGICAL DEBATE IN A NUTSHELL

The present debate is concerned with the usefulness of p-values and statistical significance declarations for making inferences about a broader context based only on a limited sample of data. Simply put, two crucial questions can be discerned in this debate:

Question 1 – Transforming information: What we can extract – at best – from a sample is an unbiased point estimate (“signal”) of an unknown population effect size (e.g., the relationship between education and income) and an unbiased estimation of the uncertainty (“noise”), caused by random error, of that point estimation (i.e., the standard error). We can, of course, go through various mathematical manipulations. But why should we transform two intelligible and meaningful pieces of information – point estimate and standard error – into a p-value or even a dichotomous significance statement?

Question 2 – Drawing inferences from non-random samples: Statistical inference is based on probability theory and a formal chance model that links a randomly generated dataset to a broader target population. More pointedly, statistical assumptions are empirical commitments and acting as if one obtained data through random sampling does not create a random sample. How should we then make inferences about a larger population in the many cases where there is only a convenience sample, such as a group of haphazardly recruited survey respondents, that researchers could get hold of in one way or the other?

It seems that, given the loss of information and the inferential errors that are associated with the NHST-routine, no convincing answer to the first question can be provided by its advocates. Even worse prospects arise when looking at the second question. Severe assumptions violations as regards data generation are quite common in empirical research and, particularly, in survey-based research. From a logical point of view, using inferential statistical procedures for non-random samples would have to be justified by running sample selection models that remedy selection bias. Alternatively, one would have to postulate that those samples are approximately random samples, which is often a heroic but deceptive assumption. This is evident from the mere fact that other probabilistic sampling designs (e.g., cluster sampling) can lead to standard errors that are several times larger than the default which presumes simple random sampling. Therefore, standard errors and p-values that are just based on a bold assumption of random sampling – contrary to how data were actually collected – are virtually worthless. Or more bluntly: Proceeding with the conventional routine of displaying p-values and statistical significance even when the random sampling assumption is grossly violated is tantamount to pretending to have better evidence than one has. This is a breach of good scientific practice that provokes overconfident generalizations beyond the confines of the sample.

III. WHAT SHOULD WE DO?

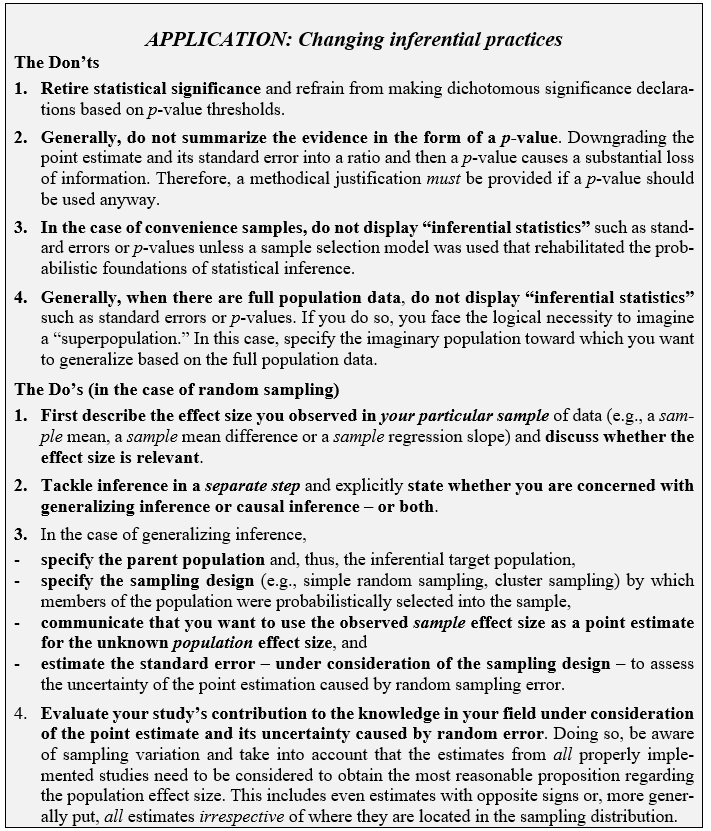

Peer-reviewed journals should be the gatekeepers of good scientific practice because they are key to what is publicly available as body of knowledge. Therefore, the most decisive statistical reform is to revise journal guidelines and include adequate inferential reporting standards. Around 2015, six prestigious economics journals (Econometrica, the American Economic Review, and the four AE Journals) adopted guidelines that require authors to refrain from using asterisks or other symbols to denote statistical significance. Instead, they are asked to report point estimates and standard errors. It seems that it would also make sense for other journals to reform their guidelines based on the understanding that the assumptions regarding random data generation must be met and that, if they are met, reporting point estimates and standard errors is a better summary of the evidence than p-values and statistical significance declarations. In particular, the Don’ts and Do’s listed in the box below should be communicated to authors and reviewers.

Journal guidelines with inferential reporting standards similar to the ones in the box above would have several benefits: (1) They would effectively communicate necessary standards to authors. (2) They would help reviewers assess the credibility of inferential claims. (3) They would provide authors with an effective defense against unqualified reviewer requests. The latter is arguably be even the most important benefit because it would also mitigate publication bias that results from the fact that many reviewers still prefer statistically significant results and pressure researchers to report p-values and “significant novel discoveries” without even taking account of whether data were randomly generated or not.

Despite the previous focus on random sampling, a last comment on inferences from randomized controlled trials (RCTs) seems appropriate: reporting standards similar to those in the box above should also be specified for RCTs. In addition, researchers should be required to communicate that, in RCTs, the standard error deals with the uncertainty caused by “randomization variation.” Therefore, in common settings where the experimental subjects are not randomly drawn from a larger population, the standard error only quantifies the uncertainty associated with the estimation of the sample average treatment effect, i.e., the effect that the treatment produces in the given group of experimental subjects. Only if the randomized experimental subjects have also been randomly sampled from a population, statistical inference can be used as auxiliary tool for making inferences about that population based on the sample. In this case, and only in this case, the adequately estimated standard error can be used to assess the uncertainty associated with the estimation of the population average treatment effect.

Prof. Norbert Hirschauer, Dr. Sven Grüner, and Prof. Oliver Mußhoff are agricultural economists in Halle (Saale) and Göttingen, Germany. The authors are interested in statistical reforms that help shift inferential reporting practices away from the dichotomy of significance tests to the estimation of effect sizes and uncertainty.

You must be logged in to post a comment.