[From the article “Cancer scientists are having trouble replicating groundbreaking research” from the Vox website] “Replication has emerged as a powerful tool to check science and get us closer to the truth. Researchers take an experiment that’s already been done, and test whether its conclusions hold up by reproducing it. The general principle is that if the results repeat, then the original results were correct and reliable. If they don’t, then the first study must be flawed, or its findings false. But there’s a big wrinkle with replication studies: They don’t work like that. As researchers reproduce more experiments, they’re learning that they can’t always get clear answers about the reliability of the original results. Replication, it seems, is a whole lot murkier than we thought.” To read more, click here.

Tyler Cowen, on his blog Marginal Revolution, notes that the four AEA American Economic Journals allow comments to appear on articles’ official webpages, but that this feature is not widely used. He then makes this statement: “One of the biggest problems with ‘economics as a science’ is that economists themselves cannot usually admit how irrelevant so much of the work — even the quality work — turns out to be.” What if, after all this effort to make research more reproducible, economists don’t bother to take advantage of it and investigate whether previous research is valid? What if, as the title of Cowen’s post suggests, “who really cares?” (To read Cowen’s post, click here.)

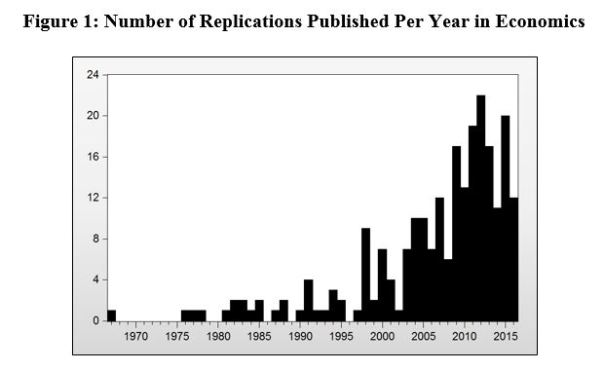

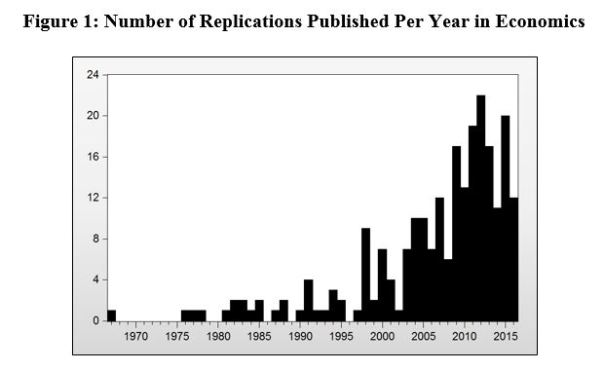

Some evidence in favour of Cowen’s post is provided in a forthcoming AER Papers and Proceedings article by Duvendack, Palmer-Jones and Reed. Using a conservative measure of replications, they find that the number of published replication studies has levelled off in recent years (see below). This is despite the fact that opportunities for publishing replications have improved during this period.

Perhaps, as a recent Guest Blog from Dan Hamermesh suggests, there is plenty of replication activity that is not being picked up. But the question is fair game: Does anybody really care? Revealed preference via replications should be able to provide an answer.

[From the home page of the journal Nature Human Behaviour] “Authors who wish to publish their work with us have the option of a registered report. With this format, acceptance in principle happens before the research outcomes are known. As a result, publication bias is neutralized, as are incentives for practices that undermine the validity of scientific research.” (Click here)

NOTE: This entry is based on “Replication in Labor Economics: Evidence from Data, and What It Suggests,” American Economic Review, 107 (May 2017).

In Hamermesh (2007) I bemoaned the paucity of “hard-science” style replication in applied economics. I shouldn’t have, as my examination of the citation histories of 10 leading articles in empirical labor economics published between 1990 and 1996 shows. Each selected article had to have been published in a so-called “Top 5” journal and to have accumulated at least 1000 Google Scholar (GS) citations. I examined every publication that the Web of Science had recorded as having cited the work, reading first the abstract and then, if necessary, skimming through the citing paper itself. I classified each citing article by whether it was: 1) Related to; 2) Inspired by; 3) Very similar to but using different data; or 4) A direct replication at least partly using the same data.[1]

The distribution of the more than 3000 citing papers across the four categories was: Related, 92.9 percent; inspired, 5.0 percent; similar, 1.5 percent; replicated, 0.6 percent. Replication, even defined somewhat loosely, is fairly rare even for these most highly visible studies. However, 7 of the 10 articles were replicated (coded 3 or 4) at least 5 times, with the remaining 3 replicated 1, 2 and 4 times. Published replications of these most heavily-cited papers are performed, so that one might view the replication glass as 100 percent full.

Replications of most studies, even those appearing in Top 5 journals, are not published, nor should they be: The majority of articles in those journals are essentially ignored (Hamermesh, 2017), so that the failure to replicate them is unimportant. But the most important studies (judged by market responses) are replicated as they should be: by taking the motivating economic idea and examining its implications using a set of data describing a different time and/or economy. The empirical validity of these ideas, after their relevance is first demonstrated for a particular time and place, can only be usefully replicated at other times and places: If they are general descriptions of behavior, they should hold up beyond their original testing ground. Simple laboratory-style replication is important in catching errors in influential work; but the more important replication goes beyond this and, as I’ve shown, is done.

The evidence suggests that the system is not broken and does not need fixing. But what if one believes that more replication, using mostly the same data as in the original study, is necessary? A bit of history: During the 1960s the American Economic Review was replete with replication-like papers, in the form of Comments (often in the form of replications on the same or other data), Replies and even Rejoinders. For example, in the four regular issues of the 1966 volume, 16 percent of the space went to such contributions. In the first four regular issues of the 2013 volume only 4 percent did, reflecting a change that began by the 1980s. Editors shifted away from cluttering the Review’s pages with Comments, etc., perhaps reflecting their desire to maximize its impact on the profession in light of their realization that pages devoted to this type of exercise generate little attention from other authors (Whaples, 2006).

We have had replications or approximations thereof in the past, but the market for scholarship—as indicated by their impact—has exhibited little interest in them. And we still publish replications, but, as I have shown, in the more appropriate and worthwhile form of examinations of data from other times and places. Overall the evidence suggests that the system is not broken and does not need fixing; and that the most obvious way of fixing this unbroken system has already been rejected by the market.

Daniel Hamermesh is Professor of Economics at Royal Holloway, University of London, Research Associate at the National Bureau of Economic Research, and Research Associate at the Institute for the Future of Labor (IZA).

REFERENCES

Daniel S. Hamermesh. 2007. “Viewpoint: Replication in Economics.” Canadian Journal of Economics 40 (3): 715-33.

________________. 2017. “Citations in Economics: Measurement, Impacts and Uses.” Journal of Economic Literature 55, forthcoming.

Robert M. Whaples. 2006. “The Costs of Critical Commentary in Economics Journals.” Econ Journal Watch 3 (2): 275-82.

————————————

[1] To be classified as “inspired” the citing paper had to refer repeatedly to the original paper and/or had to make clear that it was inspired by the methodology of the original work. To be noted as “similar” the citing paper had to use the exact same methodology but on a different data set, while a study classified as a “replication” went further to include at least some of the data in the original study. Thus even a “replication” in many cases involved more than simply re-estimating models in the original article using the same data.

Two faculty at the University of Washington, Carl T. Bergstrom and Jevin West, recently proposed a university course entitled, “Calling Bullshit in the Age of Big Data”. They describe the motivation and aim of the course as follows: “Politicians are unconstrained by facts. Science is conducted by press release. So-called higher education often rewards bullshit over analytic thought. Startup culture has elevated bullshit to high art. Advertisers wink conspiratorially and invite us to join them in seeing through all the bullshit, then take advantage of our lowered guard to bombard us with second-order bullshit. The majority of administrative activity, whether in private business or the public sphere, often seems to be little more than a sophisticated exercise in the combinatorial reassembly of bullshit. We’re sick of it. It’s time to do something, and as educators, one constructive thing we know how to do is to teach people. So, the aim of this course is to help students navigate the bullshit-rich modern environment by identifying bullshit, seeing through it, and combating it with effective analysis and argument.” To see the syllabus for the course, click here.

TRN previously posted about two sessions on replication at the 2017 ASSA meetings. In what may be a sign of changing times, the American Economic Association is sponsoring a third session on this topic. The session, “Meta-Analysis and Reproducibility in Economics Research”, is chaired by Ted Miguel and features research by John Ioannidis, Tom Stanley, Chris Doucouliagos, and several SSMART grant recipients associated with the Berkeley Initiative for Transparency in the Social Sciences (BITSS). Four papers will be presented:

— How Often Should We Believe Positive Results?

— External Validity in United States Education Research

— Aggregating Distributional Treatment Effects: A Bayesian Hierarchical Analysis of the Microcredit Literature

— Why Economics is Weak and Biased

For more information about the session, including links to download the papers, click here.

[From Dave Giles’ blog Econometrics Beat] The American Statistical Association announced several new initiatives to enhance reproducibility at its flagship journal, the Journal of the American Statistical Association (JASA). In addition to requiring submitters to provide data and code, JASA has created a new associate editor position titled Associate Editor for Reproducibility (AER). The AER will be involved with the review of manuscripts, and will evaluate the “usability of the code and data by others who might seek to reproduce the work.” To read more, click here.

[From the StatTag webpage at Northwestern University] “StatTag is a free software plug-in for conducting reproducible research. It facilitates the creation of dynamic documents using Microsoft Word documents and statistical software, such as Stata. Users can use StatTag to embed statistical output (estimates, tables and figures) into a Word document and then with one click individually or collectively update output with a call to the statistical program. What makes StatTag different from other tools for creating dynamic documents is that it allows for statistical code to be edited directly from Microsoft Word. Using StatTag means that modifications to a dataset or analysis no longer require transcribing or re-copying results into a manuscript or table.” To read a short blog about StatTag, click here.

[From the article “The State of Reproducibility: 16 Advances from 2016”, posted at the website for JoVE, the Journal of Visualized Experiments] “2016 saw a tremendous amount of discussion and development on the subject of scientific reproducibility. Were you able to keep up? If not, check out this list of 16 sources from 2016 to get you up to date for the new year!” To read more, click here.

For those interested in replications who happen to be in Chicago in early January, you may find the following two sessions at the ASSA meetings to be of interest:

Session: REPLICATION AND ETHICS IN ECONOMICS: THIRTY YEARS AFTER DEWALD, THURSBY AND ANDERSON

Papers:

— What is Meant by ‘Replication’ and Why Does It Encounter Such Resistance in Economics?

— Replication and Economics Journal Policies

— Replication versus Meta-Analysis in Economics: Where Do We Stand 30 Years After Dewald, Thursby and Anderson?

— Is Economics Research Replicable? Sixty Published Papers From Thirteen Journals Say “Usually Not”

NOTE: Rumour has it that Dewald, Thursby and Anderson will all be there. To learn more, click here.

Session: REPLICATION IN MICROECONOMICS

Papers:

— Assessing the Rate of Replication in Economics

— Replications in Development

— What is Replication? The Possibly Exemplary Example of Labor Economics

— A Proposal for Promoting Replications
