Debunking Three Common Claims of Scientific Reform

[Excerpts are taken from the article, “The case for formal methodology in scientific reform” by Berna Devezer, Danielle Navarro, Joachim Vandekerckhove, and Erkan Buzbas, posted at bioRxiv]

“Methodologists have criticized empirical scientists for: (a) prematurely presenting unverified research results as facts; (b) overgeneralizing results to populations beyond the studied population; (c) misusing or abusing statistics; and (d) lack of rigor in the research endeavor that is exacerbated by incentives to publish fast, early, and often. Regrettably, the methodological reform literature is affected by similar practices.”

“In this paper we advocate for the necessity of statistically rigorous and scientifically nuanced arguments to make proper methodological claims in the reform literature. Toward this aim, we evaluate three examples of methodological claims that have been advanced and well-accepted (as implied by the large number of citations) in the reform literature:”

1. “Reproducibility is the cornerstone of, or a demarcation criterion for, science.”

2. “Using data more than once invalidates statistical inference.”

3. “Exploratory research uses “wonky” statistics.”

“Each of these claims suffers from some of the problems outlined earlier and as a result, has contributed to methodological half-truths (or untruths). We evaluate each claim using statistical theory against a broad philosophical and scientific background.”

Claim 1: Reproducibility is the cornerstone of, or a demarcation criterion for, science.

“A common assertion in the methodological reform literature is that reproducibility is a core scientific virtue and should be used as a standard to evaluate the value of research findings…This view implies that if we cannot reproduce findings…we are not practicing science.”

“The focus on reproducibility of empirical findings has been traced back to the influence of falsificationism and the hypothetico-deductive model of science. Philosophical critiques highlight limitations of this model. For example, there can be true results that are by definition not reproducible…science does—rather often, in fact—make claims about non-reproducible phenomena and deems such claims to be true in spite of the non-reproducibility.”

“We argue that even in scientific fields that possess the ability to reproduce their findings in principle, reproducibility cannot be reliably used as a demarcation criterion for science because it is not necessarily a good proxy for the discovery of true regularities. To illustrate this, consider the following two unconditional propositions: (1) reproducible results are true results and (2) non-reproducible results are false results.”

True results are not necessarily reproducible

“Our first proposition is that true results are not always reproducible…for finite sample studies involving uncertainty, the true reproducibility rate must necessarily be smaller than one for any result. This point seems trivial and intuitive. However, it also implies that if the uncertainty in the system is large, true results can have reproducibility close to 0.”
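A minimal sketch of this point, under assumptions that are not taken from the article: a result counts as "reproduced" when a replication of the same size rejects the null in an upper-tail one-sample z-test with known standard deviation. Under that working definition the reproducibility rate of a true effect is simply the power of the test. The function name `reproducibility_rate` and all numeric values are illustrative.

```python
from statistics import NormalDist


def reproducibility_rate(effect, sigma, n, alpha=0.05):
    """Probability that a replication of size n rejects H0: mean <= 0
    in an upper-tail z-test, when the true mean equals `effect`.

    Assumption: "reproduced" means "the replication also rejects H0".
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha)
    # The test statistic is normal with unit variance and this mean shift.
    shift = effect * n ** 0.5 / sigma
    return 1 - std_normal.cdf(z_crit - shift)


# Same true effect and sample size, increasing noise in the system.
rates = {
    sigma: reproducibility_rate(effect=0.5, sigma=sigma, n=25)
    for sigma in (1, 5, 25, 100)
}

for sigma, rate in rates.items():
    print(f"sigma={sigma:>3}: reproducibility rate = {rate:.3f}")
```

In this sketch the rate is always below one and falls toward the significance level as the noise grows, even though the effect is real in every case. How close to 0 it can get depends on how "reproduced" is defined, which is why the definition above is flagged as an assumption.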

False results might be reproducible

“In well-cited articles in methodological reform literature, high reproducibility of a result is often interpreted as evidence that the result is true…The rationale is that if a result is independently reproduced many times, it must be a true result. This claim is not always true. To see this, it is sufficient to note that the true reproducibility rate of any result depends on the true model and the methods used to investigate the claim.”

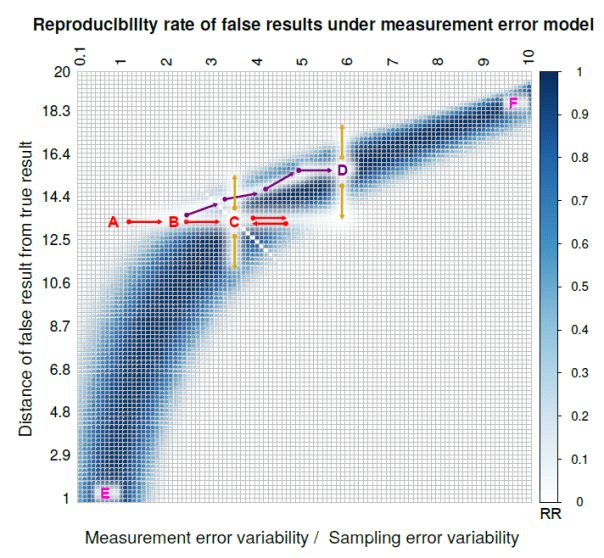

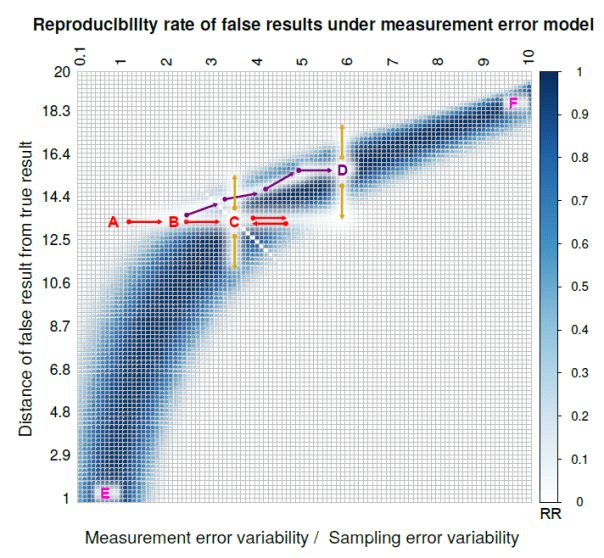

“We consider model misspecification under a measurement error model in simple linear regression…The blue belt in Figure 2 shows that as measurement error variability grows with respect to sampling error variability, effects farther away from the true effect size become perfectly reproducible. At point F in Figure 2, the measurement error variability is ten times as large as the sampling error variability, and we have perfect reproducibility of a null effect when the true underlying effect size is in fact large.”
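The mechanism can be illustrated with a small simulation. This is a sketch under assumptions, not the authors' simulation: it uses the classical errors-in-variables setup, where the predictor is observed with additive noise and the fitted slope is attenuated toward zero. The helper names (`ols_slope`, `mean_slope`) and the parameter values are hypothetical.

```python
import random


def ols_slope(x, y):
    """Ordinary least squares slope for simple linear regression."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    return sxy / sxx


def mean_slope(beta, error_sd, n=200, reps=300, seed=0):
    """Average fitted slope over `reps` replications when the predictor
    is observed with measurement error of standard deviation `error_sd`.

    Assumptions: true predictor and outcome noise are standard normal.
    """
    rng = random.Random(seed)
    slopes = []
    for _ in range(reps):
        x_true = [rng.gauss(0, 1) for _ in range(n)]
        y = [beta * x + rng.gauss(0, 1) for x in x_true]
        # The analyst only sees a noisy version of the predictor.
        x_obs = [x + rng.gauss(0, error_sd) for x in x_true]
        slopes.append(ols_slope(x_obs, y))
    return sum(slopes) / reps


no_error = mean_slope(beta=2.0, error_sd=0.0)
large_error = mean_slope(beta=2.0, error_sd=10.0)

print(f"mean slope without measurement error:    {no_error:.3f}")
print(f"mean slope with large measurement error: {large_error:.3f}")
```

With a true slope of 2.0, the replications without measurement error recover a slope near 2.0, while the replications with large measurement error agree with one another on a slope near zero. The near-null result is highly consistent across replications and still wrong about the underlying effect.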

Claim 2: Using data more than once invalidates statistical inference

“A well-known claim in the methodological reform literature regards the (in)validity of using data more than once, which is sometimes colloquially referred to as double-dipping or data peeking…This rationale has been used in reform literature to establish the necessity of preregistration for “confirmatory” statistical inference. In this section, we provide examples to show that it is incorrect to make these claims in overly general terms.”

“The key to validity is not how many times the data are used, but appropriate application of the correct conditioning…”

“Figure 4 provides an example of how conditioning can be used to ensure that nominal error rates are achieved. We aim to test whether the mean of Population 1 is greater than the mean of Population 2, where both populations are normally distributed with known variances. An appropriate test is an upper-tail two-sample z-test. For a desired level of test, we fix the critical value at z, and the test is performed without performing any prior analysis on the data. The sum of the dark green and dark red areas under the black curve is the nominal Type I error rate for this test.”

“Now, imagine that we perform some prior analysis on the data and use it only if it obeys an exogenous criterion: We do not perform our test unless “the mean of the sample from Population 1 is larger than the mean of the sample from Population 2.” This is an example of us deriving our alternative hypothesis from the data. The test can still be made valid, but proper conditioning is required.”

“If we do not condition on the information given within double quotes and we still use z as the critical value, we have inflated the observed Type I error rate by the sum of the light green and light red areas because the distribution of the test statistic is now given by the red curve. We can, however, adjust the critical value from z to z* such that the sum of the light and dark red areas is equal to the nominal Type I error rate, and the conditional test will be valid.”
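The adjustment from z to z* can be worked out numerically in a simplified case. The sketch below assumes the null hypothesis holds with equal population means, so the z statistic is standard normal, and it treats the condition in double quotes as equivalent to the statistic being positive. These simplifications are assumptions made here and are not taken from Figure 4 itself.

```python
from statistics import NormalDist

std_normal = NormalDist()
alpha = 0.05  # nominal Type I error rate (illustrative value)

# Critical value for the unconditional upper-tail z-test.
z = std_normal.inv_cdf(1 - alpha)


def conditional_type1(crit):
    """P(Z > crit | Z > 0) for a standard normal Z and crit >= 0.

    Under the assumed null, the test is only run when Z > 0,
    an event with probability 0.5.
    """
    return (1 - std_normal.cdf(crit)) / 0.5


# Critical value that restores the nominal rate after conditioning.
z_star = std_normal.inv_cdf(1 - alpha / 2)

naive_rate = conditional_type1(z)
adjusted_rate = conditional_type1(z_star)

print(f"z  = {z:.3f}, conditional Type I error = {naive_rate:.3f}")
print(f"z* = {z_star:.3f}, conditional Type I error = {adjusted_rate:.3f}")
```

Under these assumptions, keeping z after looking at the sample means doubles the error rate from 0.05 to 0.10, while moving to the larger critical value z* brings it back to 0.05. The data are used twice in both cases; only the second accounts for the first use.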

“We have shown that using data multiple times per se does not present a statistical problem. The problem arises if proper conditioning on prior information or decisions is skipped.”

Claim 3: Exploratory research uses “wonky” statistics

“A large body of reform literature advances the exploratory-confirmatory research dichotomy from an exclusively statistical perspective. Wagenmakers et al. (2012) argue that purely exploratory research is one that finds hypotheses in the data by post-hoc theorizing and using inferential statistics in a “wonky” manner where p-values and error rates lose their meaning: ‘In the grey area of exploration, data are tortured to some extent, and the corresponding statistics is somewhat wonky.’”

“Whichever method is selected for EDA [exploratory data analysis]; …it needs to be implemented rigorously to maximize the probability of true discoveries while minimizing the probability of false discoveries….repeatedly misusing statistical methods, it is possible to generate an infinite number of patterns from the same data set but most of them will be what Good (1983, p.290) calls a kinkus—‘a pattern that has an extremely small prior probability of being potentially explicable, given the particular context’.”

“The above discussion should make two points clear, regarding Claim 3: First, exploratory research cannot be reduced to exploratory data analysis and thereby to the absence of a preregistered data analysis plan, and second, when exploratory data analysis is used for scientific exploration, it needs rigor. Describing exploratory research as though it were synonymous with or accepting of “wonky” procedures that misuse or abuse statistical inference not only undermines the importance of systematic exploration in the scientific process but also severely handicaps the process of discovery.”

Conclusion

“Simple fixes to complex scientific problems rarely exist. Simple fixes motivated by speculative arguments, lacking rigor and proper scientific support might appear to be legitimate and satisfactory in the short run, but may prove to be counter-productive in the long run.”

