Redefining RSS

[From the blog post “Justify Your Alpha by Decreasing Alpha Levels as a Function of the Sample Size” by Daniël Lakens, posted at The 20% Statistician]

“Testing whether observed data should surprise us, under the assumption that some model of the data is true, is a widely used procedure in psychological science. Tests against a null model, or against the smallest effect size of interest for an equivalence test, can guide your decisions to continue or abandon research lines. Seeing whether a p-value is smaller than an alpha level is rarely the only thing you want to do, but especially early on in experimental research lines where you can randomly assign participants to conditions, it can be a useful thing. Regrettably, this procedure is performed rather mindlessly.”

“…Here I want to discuss one of the least known, but easiest suggestions on how to justify alpha levels in the literature, proposed by Good. The idea is simple, and has been supported by many statisticians in the last 80 years: Lower the alpha level as a function of your sample size.”

“Leamer (you can download his book for free) correctly notes that this behavior, an alpha level that is a decreasing function of the sample size, makes sense from both a Bayesian [and] a Neyman-Pearson perspective.”

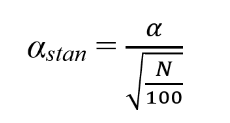

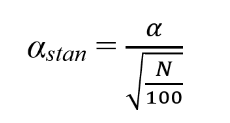
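Leamer's Neyman-Pearson point can be made concrete with a small sketch. It assumes a setup chosen here for illustration, not taken from Leamer or Lakens: unit-variance normal data, a simple null μ = 0 against a simple alternative μ = δ, and an alpha picked to minimize the unweighted sum of the Type I and Type II error rates. The helper names `upper_tail`, `error_rates`, and `best_alpha` are hypothetical.

```python
import math


def upper_tail(z: float) -> float:
    """P(Z > z) for a standard normal Z, via the complementary error function."""
    return 0.5 * math.erfc(z / math.sqrt(2))


def error_rates(c: float, delta: float, n: int) -> tuple[float, float]:
    """Type I and Type II error rates when H0 is rejected if the sample mean exceeds c.

    Illustrative assumption: unit-variance normal data, H0: mu = 0 vs H1: mu = delta.
    """
    se = 1 / math.sqrt(n)                     # standard error of the sample mean
    alpha = upper_tail(c / se)                # P(mean > c | mu = 0)
    beta = 1 - upper_tail((c - delta) / se)   # P(mean <= c | mu = delta)
    return alpha, beta


def best_alpha(delta: float, n: int, steps: int = 10_000) -> float:
    """Grid-search the cutoff c in [0, delta] minimizing alpha + beta; return that alpha."""
    cutoffs = [delta * i / steps for i in range(steps + 1)]
    best_c = min(cutoffs, key=lambda c: sum(error_rates(c, delta, n)))
    return error_rates(best_c, delta, n)[0]


# Hypothetical effect size delta = 0.5: the error-minimizing alpha shrinks as n grows.
for n in (20, 50, 100, 500):
    print(f"n = {n:>3}: alpha = {best_alpha(0.5, n):.2e}")
```

With equal weights on both errors the optimum cutoff sits at δ/2, where α = β = P(Z > δ√n/2), so under these assumptions the best alpha falls steadily with the sample size instead of staying fixed at 0.05.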

“So instead of an alpha level of 0.05, we can think of a standardized alpha level:”

$$\alpha_{\text{stan}} = \frac{\alpha}{\sqrt{N/100}}$$

where N is the sample size.

“…with 100 participants α and αstan are the same, but as the sample size increases above 100, the alpha level becomes smaller. For example, an α = .05 observed in a sample size of 500 would have an αstan of 0.02236.”
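The standardization itself is simple arithmetic. The sketch below assumes the form α_stan = α / √(N/100), which reproduces both statements in the quote (equality at N = 100 and 0.02236 at N = 500); the function name `standardized_alpha` and its `reference_n` parameter are hypothetical.

```python
import math


def standardized_alpha(alpha: float, n: int, reference_n: int = 100) -> float:
    """Scale alpha by sqrt(reference_n / n), so that alpha_stan equals alpha at n = reference_n."""
    return alpha / math.sqrt(n / reference_n)


# Standardized version of alpha = .05 at several sample sizes.
for n in (50, 100, 500, 1000, 10_000):
    print(n, round(standardized_alpha(0.05, n), 5))
```

The N = 500 row reproduces the 0.02236 from the quote. The same formula gives an alpha above 0.05 for samples smaller than 100 participants.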
