What Do Replications Tell Us About the Reliability of Meta-Analyses? Evidence from Psychology

[Excerpts taken from the article “Comparing meta-analyses and preregistered multiple-laboratory replication projects” by Amanda Kvarven, Eirik Strømland, and Magnus Johannesson, published in Nature Human Behaviour]

“In the past 30 years, the number of meta-analyses published across scientific fields has been growing exponentially and some scholars have called for greater reliance on ‘meta-analytic thinking’ in the behavioural sciences.”

“However, the properties of a meta-analysis depend on the primary studies that it includes; if primary studies overestimate effect sizes in the same direction, so too will the meta-analysis.”
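To make the point in this excerpt concrete, here is a minimal sketch of how a shared bias passes straight through pooling. It assumes a simple fixed-effect, inverse-variance weighted average, which is not necessarily the model used in any of the meta-analyses discussed. The function name and all numbers are hypothetical illustrations, not data from the article.

```python
def pooled_fixed_effect(estimates, std_errors):
    """Inverse-variance weighted mean and its standard error (fixed-effect assumption)."""
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, estimates)) / total_weight
    pooled_se = (1.0 / total_weight) ** 0.5
    return pooled, pooled_se


# Hypothetical illustration: every primary study overestimates a true
# effect of 0.10 by the same amount (0.20), with different precision.
true_effect = 0.10
shared_bias = 0.20
std_errors = [0.05, 0.10, 0.20]
biased_estimates = [true_effect + shared_bias for _ in std_errors]

pooled, pooled_se = pooled_fixed_effect(biased_estimates, std_errors)
print(f"pooled estimate = {pooled:.2f}, true effect = {true_effect:.2f}")
```

Pooling shrinks the standard error, but it cannot remove a bias that every input shares. The pooled estimate is simply more precise about the wrong value.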

“Our approach is to use large-scale registered replication studies in psychology carried out at multiple laboratories…as a baseline to which the results of meta-analyses on the same topics will be compared.”

“We started by collecting data on studies in psychology where many different laboratories joined forces to replicate a well-known effect according to a pre-analysis plan.”

“After identification of relevant replication experiments, we searched for meta-analyses on the same research question.”

“…our final dataset spans 15 preregistered replication studies using a multiple-laboratory format…and 15 corresponding meta-analyses on the same research question…”

“We compared the meta-analytic to the replication effect size for each effect using a z-test.”
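The excerpt does not spell out the test statistic. A common way to compare two independent estimates is to divide their difference by the square root of the sum of their squared standard errors. The sketch below assumes that form; the function name and the input values are hypothetical and are not taken from the article.

```python
import math


def z_test_difference(est_a, se_a, est_b, se_b):
    """Two-tailed z-test for the difference between two independent estimates.

    Assumes both estimates are approximately normal and independent.
    Returns (z, p_value).
    """
    se_diff = math.sqrt(se_a ** 2 + se_b ** 2)
    z = (est_a - est_b) / se_diff
    # Two-tailed p-value under the standard normal: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2.0))
    return z, p_value


# Hypothetical numbers: a meta-analytic effect of 0.40 (SE 0.05)
# versus a replication effect of 0.10 (SE 0.03).
z, p = z_test_difference(0.40, 0.05, 0.10, 0.03)
print(f"z = {z:.2f}, two-tailed p = {p:.2g}")
```

A positive z here means the first estimate (the meta-analysis in this illustration) is larger than the second.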

“All our statistical tests are two-tailed and follow the recent recommendation to refer to tests with P < 0.005 as statistically significant and P < 0.05 as suggestive evidence against the null.”
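The two-threshold convention can be written as a small helper. This is a sketch under one assumption: the "suggestive" band is read as 0.005 ≤ P < 0.05. The function name and labels are illustrative only.

```python
def classify_p_value(p):
    """Label a two-tailed p-value using the 0.005 / 0.05 convention.

    Assumption: 'suggestive' covers 0.005 <= p < 0.05.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie in [0, 1]")
    if p < 0.005:
        return "significant"
    if p < 0.05:
        return "suggestive"
    return "not significant"


for p in (0.001, 0.02, 0.30):
    print(p, "->", classify_p_value(p))
```

Under this convention, a result with P = 0.02 would count only as suggestive evidence, although it clears the traditional 0.05 threshold.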

“As seen in Fig. 2a, the meta-analysis and replication studies reach the same conclusion about the direction of the effect using the 0.005 statistical significance criterion for seven (47%) study pairs…”

“For seven (47%) study pairs, the meta-analysis finds a significant effect in the original direction whereas the replication cannot reject the null hypothesis…”

“…in the remaining study pair, the meta-analysis cannot reject the null hypothesis whereas the replication study finds a significant effect in the opposite direction to the original study.”

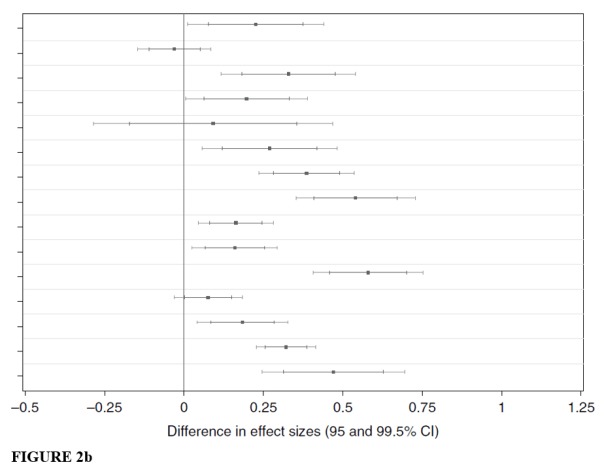

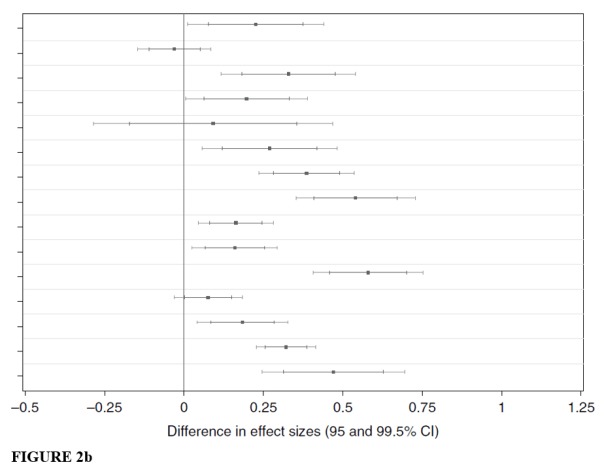

“In Fig. 2b we can see that the difference in estimated effect size is significant for 12 (80%) of the studies, and there is suggestive evidence of a difference for one additional study. For all 12 studies, the effect size is higher in the meta-analysis.”

“A central caveat in interpreting our findings is the potential impact of heterogeneity in the meta-analyses in our sample.”

“We find no evidence of our findings being explained by heterogeneity in meta-analysis and replicator selection but, at the same time, we cannot rule out that replicator selection has affected our results. Our results should therefore be interpreted cautiously, and further work on heterogeneity and replicator selection is important.”

“In a previous related study in the field of medicine, 12 large randomized, controlled trials published in four leading medical journals were compared to 19 meta-analyses published previously on the same topics.”

“They did not provide any results for the pooled overall difference between meta-analyses and large clinical trials, but from graphical inspection of the results there does not appear to be a sizeable systematic difference.”

“This difference in results between psychology and medicine could reflect a genuine difference between those fields, but it could also reflect the fact that even large clinical trials in medicine are subject to selective reporting or publication bias.”

“An important difference between medicine and psychology is also the requirement of the former to register randomized controlled trials, which may diminish the biases of published studies; no such requirement exists in psychology.”

“A potentially effective policy for reducing publication bias and selective reporting is preregistration of analysis plans before data collection, an increasing trend in psychology. This has the potential to increase the credibility of both the original studies and meta-analyses, rendering the latter a more valuable tool for aggregation of research results.”

To read the article, click here (NOTE: Article is behind a paywall).
