Recently, Paul Smaldino and Richard McElreath published the results of a computer simulation where scientific research is governed by “laws” of natural selection based on publishing “success.” Their finding that perverse incentives can cause “bad science” to push out good science has received much attention (see, for example, here and here).

In a related article, “Academic Research in the 21st Century: Maintaining Scientific Integrity in a Climate of Perverse Incentives and Hypercompetition”, MARC EDWARDS and SIDDHARTHA ROY, professors of civil engineering at Virginia Tech University, argue that competition for research funding can reach a tipping point at which unethical behaviour drives scientific integrity, and scientists with integrity, out of research.

The article is noteworthy for a number of reasons, not the least of which is a table that aggregates a number of “Perverse Incentives in Academia”. It’s also noteworthy for this statement: “…a tipping point is possible in which the scientific enterprise itself becomes inherently corrupt and public trust is lost, risking a new dark age with devastating consequences to humanity.” To read the article, click here.

[From the article “Why is so much research dodgy? Blame the Research Excellence Framework” in The Guardian] “In the UK, the Ref [Research Excellence Framework] ranks the published works of researchers according to their originality (how novel is the research?), significance (does it have practical or commercial importance?), and rigour (is the research technically sound?). Outputs are then awarded one to four stars….The Ref completely undermines our efforts to produce a reliable body of knowledge. Why? The focus on originality and accessibility – publications exploring new areas of research using new paradigms, and avoiding testing well-established theories – is the exact opposite of what science needs to be doing to solve the troubling replication crisis. According to Ref standards, replicating an already published piece of work is simply uninteresting.” To read more, click here.

My Dear Fellow Scientists!

“If you torture the data long enough, it will confess.”

This aphorism, attributed to Ronald Coase, has sometimes been used disparagingly, as if there were something wrong with creative data analysis.

In fact, the art of creative data analysis has suffered despicable attacks in recent years. A small but annoyingly persistent group of second-stringers is trying to denigrate our scientific achievements. They drag science through the mire.

These people propagate stupid method repetitions (also known as “direct replications”), and what was once one of the supreme disciplines of scientific investigation, the creative analysis of a data set, has been reduced to executing an empty-headed, step-by-step, pre-registered analysis plan. (Come on: if I lay out the full analysis plan in a pre-registration, even an undergrad can do the final analysis, right? Is that really the high-level scientific work we trained so hard for?)

They broadcast with annoying frequency that p-hacking leads to more significant results, and that researchers who p-hack have a higher chance of getting published.

What are the consequences of these findings? The answer is clear: everybody should be equipped with these powerful tools of research enhancement!

The Art of Creative Data Analysis

Some researchers describe a performance-oriented data analysis as “data-dependent analysis”. We go one step further, and call this technique data-optimal analysis (DOA), as our goal is to produce the optimal, most significant outcome from a data set.

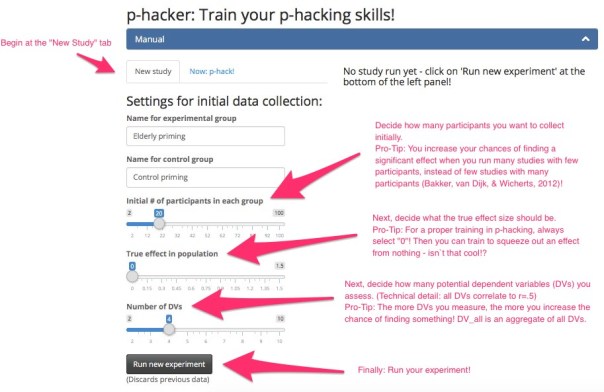

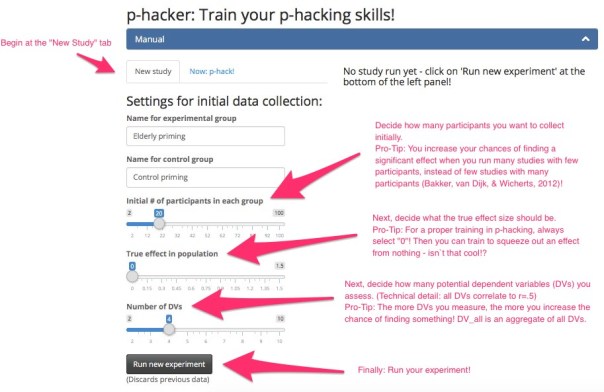

I developed an online app that lets you practice creative data analysis and learn how to polish your p-values. It’s primarily aimed at young researchers who do not yet have our level of expertise, but I guess even old hands might learn a new trick or two! It’s called “The p-hacker” (please note that ‘hacker’ is meant in a very positive way here: think of the cool hackers who fight for world peace). You can use the app in teaching, or to practice p-hacking yourself.

Please test the app, and give me feedback! You can also send it to colleagues: http://shinyapps.org/apps/p-hacker

The full R code for this Shiny app is on GitHub.

Train Your p-Hacking Skills: Introducing the p-Hacker App

Here’s a quick walk-through of the app. Please see also the quick manual at the top of the app for more details.

First, you have to run an initial study in the “New study” tab:

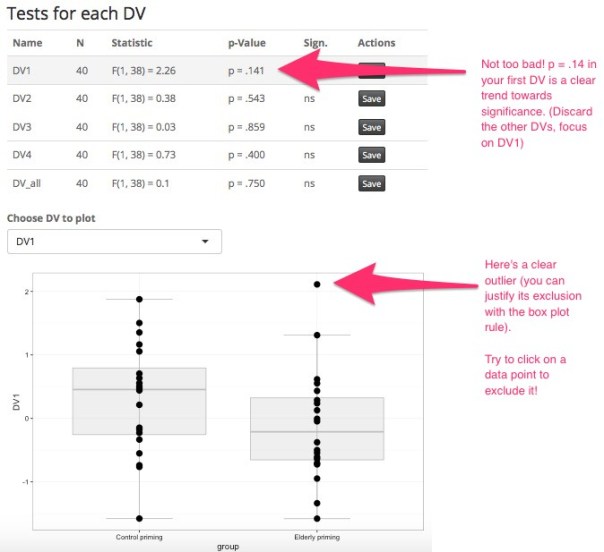

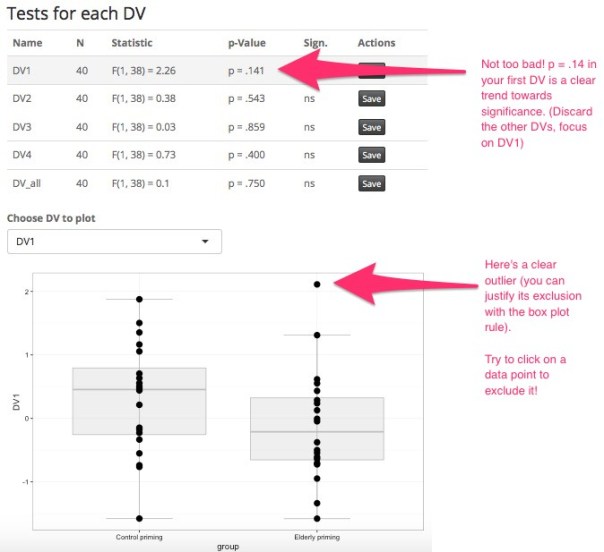

Once you have run your first study, inspect the results in the middle pane. Let’s take a look at our results, which are quite promising:

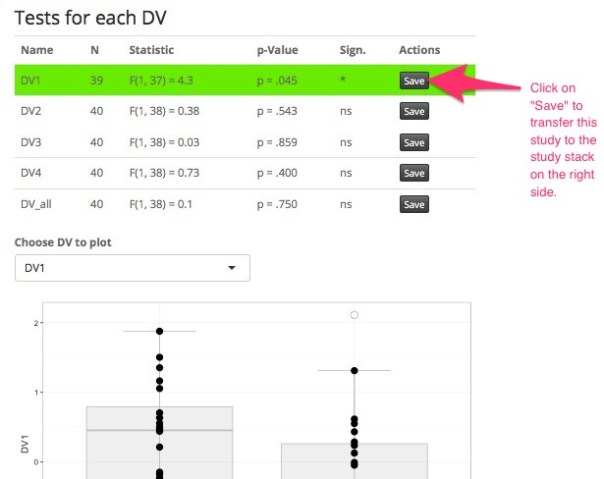

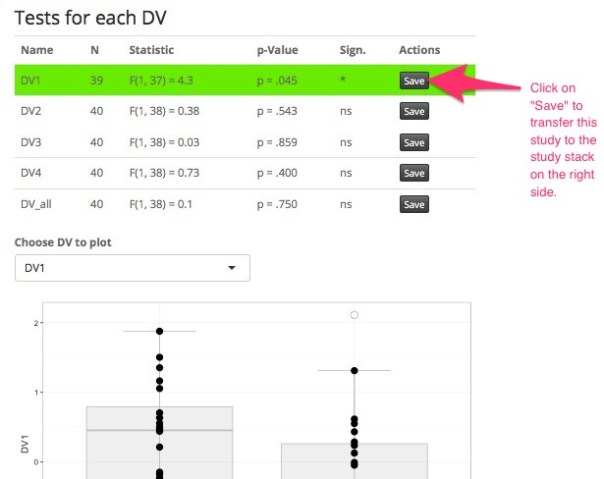

After exclusion of this obvious outlier, your first study is already a success! Click on “Save” next to your significant result to save the study to your study stack on the right panel:

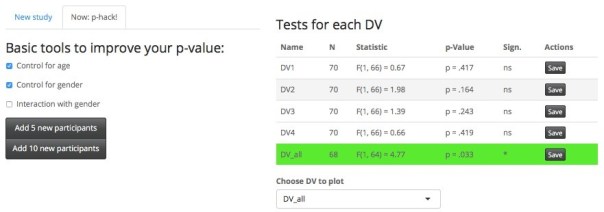

Sometimes outlier exclusion is not enough to improve your result.

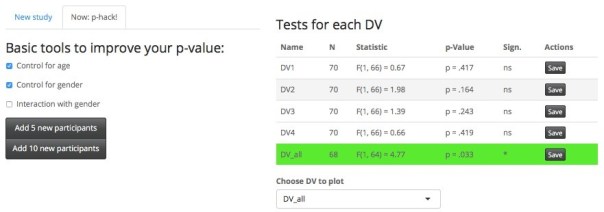

Now comes the magic. Click on the “Now: p-hack!” tab – this gives you all the great tools to improve your current study. Here you can fully utilize your data analytic skills and creativity.

In the following example, we could not get a significant result by outlier exclusion alone. But after adding 10 participants (in two batches of 5), controlling for age and gender, and focusing on the variable that worked best – voilà!

Do you see how easy it is to craft a significant study?
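For readers curious why this works so reliably, here is a minimal simulation sketch. It is not code from the p-hacker app, and the helper names (`z_test_p`, `run_study`, `false_positive_rate`) are hypothetical. It assumes normally distributed data with a known standard deviation of 1 (so it uses a z-test rather than a t-test), two independent DVs, and a hypothetical starting sample of 20 per group. It then mimics two of the tricks above: adding participants in two batches of 5 (assumed here to be 5 per group per batch) and reporting whichever DV works best. Outlier exclusion and covariates are left out. Because every data point is pure noise, every “significant” result it produces is a false positive.

```python
import math
import random


def z_test_p(a, b, sigma=1.0):
    """Two-sided p-value of a two-sample z-test, assuming a known SD sigma."""
    diff = sum(a) / len(a) - sum(b) / len(b)
    se = sigma * math.sqrt(1 / len(a) + 1 / len(b))
    # 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2))
    return math.erfc(abs(diff) / se / math.sqrt(2))


def run_study(rng, n_start=20, batches=2, batch_size=5, n_dvs=2, hack=True, alpha=0.05):
    """Simulate one study in which there is NO real effect.

    Returns True if the study ends with p < alpha, i.e. a false positive."""
    k = n_dvs if hack else 1
    # One (treatment, control) pair of samples per DV; all values are noise.
    data = [([rng.gauss(0, 1) for _ in range(n_start)],
             [rng.gauss(0, 1) for _ in range(n_start)]) for _ in range(k)]
    looks = batches + 1 if hack else 1
    for look in range(looks):
        if look > 0:
            # Optional stopping: not significant yet, so add another batch and re-test.
            for treat, ctrl in data:
                treat.extend(rng.gauss(0, 1) for _ in range(batch_size))
                ctrl.extend(rng.gauss(0, 1) for _ in range(batch_size))
        # Report whichever DV "worked best".
        if min(z_test_p(t, c) for t, c in data) < alpha:
            return True
    return False


def false_positive_rate(hack, n_sims=4000, seed=1):
    rng = random.Random(seed)
    return sum(run_study(rng, hack=hack) for _ in range(n_sims)) / n_sims


honest = false_positive_rate(hack=False)  # one DV, one pre-planned test
hacked = false_positive_rate(hack=True)   # two DVs, up to three looks
print(f"honest: {honest:.3f}  p-hacked: {hacked:.3f}")
```

The honest analysis should stay close to the nominal 5%, while the p-hacked one should land well above it. Adding more DVs or more interim looks only enlarges the set of chances to cross p < .05, so the rate can only go up.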

Now it is important to show even more productivity: go for the next conceptual replication (i.e., go back to Step 1 and collect a new sample, with a new manipulation and a new DV). Whenever your study reaches significance, click the Save button next to the DV, and the study is saved to your stack, awaiting additional conceptual replications that show the robustness of the effect.

Many journals require multiple studies. Four to six studies should make a compelling case for your subtle, counterintuitive, and shocking effects:

Honor to whom honor is due: Find the best outlet for your achievements!

My friends, let’s stand together and Make Science Great Again! I really hope that the p-hacker app can play its part in bringing science back to its old days of glory.

(A quick side note: some so-called “data detectives” have developed several methods for detecting p-hacking. This is a cowardly attack on creative data analysis. If you want to take a look at these detection tools, check out the p-checker app. You can also transfer your p-hacked results to the p-checker app with a single click on the ‘Send to p-checker’ button below your study stack on the right side.)

Best regards,

Ned Bicare, PhD

Providing access to the data is a prerequisite for replication of empirical analysis. Unfortunately, this access is not always granted to everyone (see here, and here). There is evidence that some of this may be due to concerns about requestors’ qualifications (see here).

In two recent papers, we investigated how willingness to share depended on the identity of the requestor. In a paper entitled “(Un)available upon request: field experiment on researchers’ willingness to share supplementary materials”, Ernesto Reuben and I found that only 44% of economists were willing to share supplementary research materials from a published study that they had promised to send “upon request”. This share was slightly higher when the request came from a highly prestigious university rather than a less prestigious one.

In “Gender, beauty and support networks in academia: evidence from a field experiment (Study 1)”, Magdalena Smyk and I asked experimental economists to share the data they had used in their published paper. The focus of this experiment was the role of gender: half the requests were sent by a female student, the other half by a male student. The overall compliance rate was 34.7%, with no gender effect.

The fact that the requestor’s identity matters little is good news, of course, as it suggests a roughly level playing field. However, while compliance rates in economics are higher than in many other disciplines (see here and here and here), there is still much room for improvement.

Michal Krawczyk is an Assistant Professor of Economic Sciences at the University of Warsaw, Poland.

REFERENCES

Krawczyk, M., & Reuben, E. (2012). (Un)available upon request: Field experiment on researchers’ willingness to share supplementary materials. Accountability in Research, 19(3), 175-186.

Krawczyk, M., & Smyk, M. (2015). Gender, beauty and support networks in academia: evidence from a field experiment (No. 2015-43).

Recently, another sensational study from social psychology came under renewed criticism. The study, “Power Posing: Brief Nonverbal Displays Affect Neuroendocrine Levels and Risk Tolerance”, published in Psychological Science in 2010 by Dana Carney, Amy Cuddy, and Andy Yap, claimed that adopting a “power pose” caused biological and psychological changes that made a person “more powerful”, both in fact and in feeling. What makes this case particularly interesting is that this time the dissenting opinion came from none other than the lead author of the study, Dana Carney. How Carney came to change her mind about the reliability of her findings is instructive and, dare we say, hopeful. Replication played a role, as did increased awareness of good research practices. To read more, click here.

In a recent blog post at Statistical Modeling, Causal Inference, and Social Science, ANDREW GELMAN asks: “Why is so much of the discussion about psychology research? Why not economics, which is more controversial and gets more space in the news media? Or medicine, which has higher stakes and a regular flow of well-publicized scandals?” He then gives five reasons. (1) Psychology has a history of treating measurement issues in a more sophisticated manner than many other fields. (2) Psychology has been particularly prone to false confidence in experimental design. (3) Psychology is more open than other disciplines; in particular, Gelman writes that psychology is more open than economics, “which is notoriously resistant to ideas coming from other fields.” (4) The replication crisis has touched the work of top-tier academics. (5) Psychology attracts a lot of popular attention, and dazzling claims are not immediately challenged by researchers on the other side of the ideological divide, as they are in economics. He concludes: “The strengths and weaknesses of the field of research psychology seemed to have combined to (a) encourage the publication and dissemination of lots of low-quality, unreplicable research, while (b) creating the conditions for this problem to be recognized, exposed, and discussed openly.” To read more, click here.

The problems of publication misconduct – manipulation, fabrication and plagiarism – and other dodgy practices such as salami-style publications are attracting increasing attention. In the newly published paper “Misconduct, Marginality, and Editorial Practices in Management, Business, and Economics” (full text available here), we present findings on these problems in MBE-journals and the diffusion of editorial practices to combat them (Karabag and Berggren, 2016).

The data were collected through bibliometric searches for retracted papers in the seven major databases that together cover almost all MBE journals, and through two surveys of the editors of these journals: the first sent to 60 journals with at least one public retraction, the second to all journals investigated in the bibliometric study. A total of 298 journal editors answered the second survey.

The bibliometric study identified a steep rise in retractions, from 1 retraction in 2005 to 60 in 2015. Relative to the number of published papers, the figure is still very small, but since editors face strong disincentives to retract papers, the reported number is only the tip of the misconduct iceberg. As for the problem of marginality, more than half of the editors reported experiences with salami publications, which can be defined as slicing research output into the smallest publishable units.

The survey also asked about specific practices for dealing with these problems. We found that 42% of the journals have started to use software to detect possible plagiarism, while 30% ask authors to provide a data file. Only 6% ask authors to specify each author’s role. Many editors stated that they rely on reviewers to detect and prevent misconduct. Yet despite the importance of reviewers, fewer than 40% of the journals publicly reward good reviewers, and fewer than half add good reviewers to the advisory board.

Only 10% of the journals report publishing replication studies, and even these answers may be doubted in view of other studies that find a much lower share. According to Duvendack et al. (2015), for example, only 3% of the journals they studied actually publish replication papers.

The discrepancy between the findings may reflect social desirability bias: editors may believe that publishing replication studies is socially desirable, and/or they may plan to publish them. Our survey included space for free-text comments, but no editor commented on replication studies. Our interpretation is that editors are still not particularly interested in replication studies, even though many affirm, in principle, the importance of replication for building theory and exposing manipulation.

The paper also presents positive ideas for combating marginality by supporting more creative contributions. Ninety-eight journal editors provided a rich menu of ideas, which we classified into 14 sub-themes grouped under four major themes. The first major theme, “editorial vision and engaged board”, included suggestions that editors should open up the journal and take risks, be visionary, have engaged editorial board members, and change the editorial team regularly. The second theme, “curate papers and connect authors”, comprised three sub-themes: curating and developing manuscripts, connecting authors, and constructive screening. The third major theme, labeled “open up the discussion”, contained suggestions such as publishing criticism instead of rejecting papers, inviting comments, and involving industry specialists. The fourth theme, “go beyond the mainstream”, involved ideas on mixing disciplines, limiting individual authors, and avoiding perfection.

Overall, the findings indicate broad editorial engagement with misconduct and marginality; however, we remain concerned about the amount of undetected misconduct. Several authors have argued that there is a knowledge gap in MBE due to the small number of replications and of papers testing previously presented models (cf. Duvendack et al., 2015; Kacmar and Whitfield, 2000).

Two recent cases show how valuable replication studies are. In 2010, Reinhart and Rogoff, drawing on a comparative sample of countries, argued that public debt beyond a very specific level would stifle growth during periods of crisis. This conclusion was widely cited both within and outside the academic community, but when a graduate student at the University of Massachusetts Amherst tried to replicate the study, he uncovered serious data flaws in the original paper that undermined both its conclusions and its theoretical assumptions. The critique was published (Herndon et al., 2014), but not in the American Economic Review, which had carried the original paper.

In the management field, Lepore (2014) re-examined the famous disk drive industry cases underlying Christensen’s (1997) theory of disruptive innovation. Extending the time period, the re-study found a very different pattern from the one suggested by Christensen. This finding calls for a re-assessment of the theoretical framework of disruptive innovation (Bergek et al., 2013).

The crucial point here is that neither of these important and critical replication papers was published in the journal that had published the original paper, nor did these journals actively encourage the submission of other replication studies. Public retraction of a published paper will always be a journal’s last resort. That is why it is so important to encourage close reading, reflection, and replication!

REFERENCES

Bergek, A., Berggren, C., Magnusson, T. and Hobday, M., 2013. Technological discontinuities and the challenge for incumbent firms: Destruction, disruption or creative accumulation? Research Policy, 42(6), pp.1210-1224.

Christensen, C.M., 1997. The innovator’s dilemma: When new technologies cause great firms to fail. Boston: Harvard Business School Press.

Duvendack, M., Palmer-Jones, R.W. and Reed, W.R., 2015. Replications in Economics: A progress report. Econ Journal Watch, 12(2), pp.164-191.

Herndon, T., Ash, M. and Pollin, R., 2014. Does high public debt consistently stifle economic growth? A critique of Reinhart and Rogoff. Cambridge Journal of Economics, 38, pp.257-279.

Kacmar, K.M. and Whitfield, J.M., 2000. An additional rating method for journal articles in the field of management. Organizational Research Methods, 3(4), pp.392-406.

Karabag, S.F. and Berggren, C., 2016. Misconduct, marginality and editorial practices in management, business and economics journals. PLoS ONE, 11(7), pp.1-25, e0159492. DOI: http://dx.doi.org/10.1371/journal.pone.0159492

Reinhart, C.M. and Rogoff, K.S., 2010. Growth in a time of debt. American Economic Review, 100, pp.573-578.

ANDREW GELMAN has a new blog post in which he takes on SUSAN FISKE and her labelling of (some) social media critics of psychological research as “methodological terrorists.” The post is a must-read for at least two reasons. First, Gelman gives a fairly detailed history of the key papers and events that have led to the current loss of confidence in published research. Second, Fiske’s article makes it clear that there is a methodological battle going on in psychology (as well as elsewhere). While she doesn’t target anyone in particular, by implication her shots fall on a large group of researchers. What Fiske never acknowledges is that social media has played such an important part in this struggle because the traditional venues of academic discourse, conferences and journals, had been largely closed to critics. Social media has allowed the critics of the status quo to make impressive gains. Fiske attempts to repulse this offensive. Gelman makes it clear that the critics will not fall away upon taking fire. The battle will be joined. To read more, click here.

[From the article “Cut-throat academia leads to natural selection of bad science, claims study”, which reports on a scientific study by Paul Smaldino and Richard McElreath] “Sociology, economics, climate science and ecology are other areas likely to be vulnerable to the propagation of bad practice, according to Smaldino. ‘My impression is that, to some extent, the combination of studying very complex systems with a dearth of formal mathematical theory creates good conditions for low reproducibility,’ he said. ‘This doesn’t require anyone to actively game the system or violate any ethical standards. Competition for limited resources – in this case jobs and funding – will do all the work.’ Drawing parallels with Darwin’s classic theory of evolution, Smaldino claims that various forms of bad scientific practice flourish in the academic world, much like hardy germs that thwart extermination in real life.” To read more, click here.
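Smaldino’s point that competition alone can “do all the work” can be illustrated with a deliberately crude toy model. The sketch below is not Smaldino and McElreath’s actual model: the function names and every number in it (100 labs, an “effort” level between 0 and 1, how effort trades off against study count and false-positive rate, a 10% base rate of true hypotheses, power of 0.8) are hypothetical assumptions. Labs that cut corners run more studies and get more false positives, only positive results are published, and new labs copy the methods of existing labs in proportion to their publication counts. No lab ever decides to cheat, yet average effort tends to drift downward.

```python
import random


def count_publications(effort, rng, base_rate=0.1, power=0.8):
    """Publications a lab gets in one period; only positive results are published.

    Hypothetical trade-off: lower effort means more studies per period
    and a higher false-positive rate."""
    n_studies = 5 + round(10 * (1 - effort))
    false_positive_rate = 0.05 + 0.45 * (1 - effort)
    pubs = 0
    for _ in range(n_studies):
        hypothesis_true = rng.random() < base_rate
        p_positive = power if hypothesis_true else false_positive_rate
        if rng.random() < p_positive:
            pubs += 1
    return pubs


def evolve(n_labs=100, generations=50, start_effort=0.8, mutation_sd=0.02, seed=7):
    """Return the mean effort after `generations` rounds of selection on publications."""
    rng = random.Random(seed)
    efforts = [start_effort] * n_labs
    for _ in range(generations):
        pubs = [count_publications(e, rng) for e in efforts]
        # Each new lab inherits the effort level of a "parent" lab drawn with
        # probability proportional to its publications (tiny floor avoids all-zero weights).
        weights = [p + 1e-9 for p in pubs]
        parents = rng.choices(efforts, weights=weights, k=n_labs)
        # Small random changes in methods, clipped to the [0, 1] range.
        efforts = [min(1.0, max(0.0, e + rng.gauss(0, mutation_sd))) for e in parents]
    return sum(efforts) / n_labs


final_effort = evolve()
print(f"mean effort: start 0.80, after 50 generations {final_effort:.2f}")
```

The only force at work is that publication counts decide which methods get copied; nothing in the code rewards dishonesty directly.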

[From the article “The Solution to Science’s Replication Crisis” by BRUCE KNUTESON, posted at arxiv.org] “The solution to science’s replication crisis is a new ecosystem in which scientists sell what they learn from their research. In each pairwise transaction, the information seller makes (loses) money if he turns out to be correct (incorrect). Responsibility for the determination of correctness is delegated, with appropriate incentives, to the information purchaser. Each transaction is brokered by a central exchange, which holds money from the anonymous information buyer and anonymous information seller in escrow, and which enforces a set of incentives facilitating the transfer of useful, bluntly honest information from the seller to the buyer. This new ecosystem, capitalist science, directly addresses socialist science’s replication crisis by explicitly rewarding accuracy and penalizing inaccuracy.” To read more, click here.
